For the past few years, I’ve been hearing the same narrative. AI is moving at an unprecedented speed. It will replace humans. It might even become uncontrollable. But the more I look at how technology actually integrates into society, the less convincing that story feels.

There’s another way to see this. Not as a sudden rupture, but as a continuation. AI may be powerful, but that doesn’t make it exceptional in how it spreads. Like electricity or the internet, its real impact is likely to come through gradual adoption, not overnight disruption.

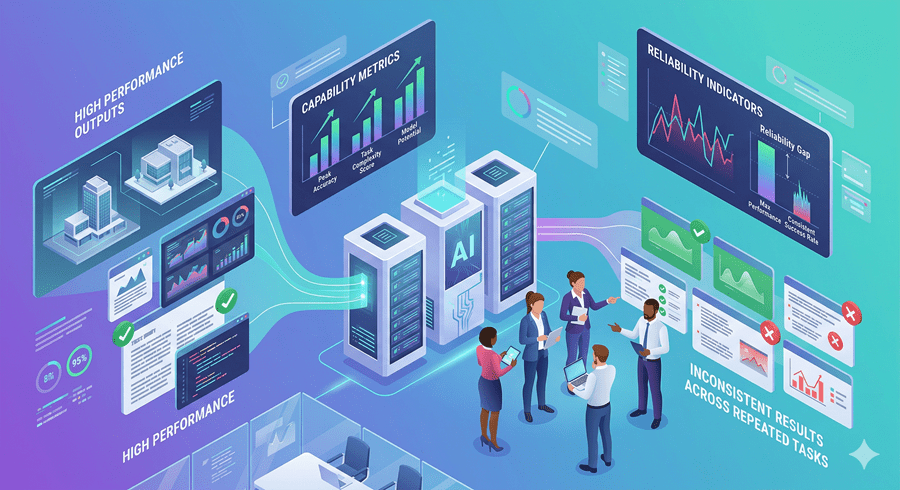

Capability Isn’t the Same as Reliability

One of the most overlooked gaps in AI discussions is the difference between what systems can do and what they can consistently do.

It’s easy to point to benchmarks and say machines perform at or above human level on certain tasks. But real-world use demands reliability. Does the system give an accurate answer every time, not just on average? Does it know when it’s wrong? Can it handle edge cases without breaking?

Without reliability, capability doesn’t translate into replacement. That’s why many jobs that seem “automatable” on paper remain stubbornly human in practice.

Productivity Creates More Work, Not Less

There’s an assumption that if AI makes work faster, it reduces the need for workers. But historically, the opposite tends to happen.

When I can answer questions faster, I don’t run out of work. I generate more of it. Each solved problem opens up new ones. Productivity expands the scope of what’s possible, and that expansion often increases demand for skilled people rather than eliminating it.

This pattern shows up repeatedly across industries. Technology tends to shift work toward higher-value tasks rather than remove it entirely.

Why Adoption Takes Time

Even when the technology is ready, integration is not simple. Businesses don’t just plug AI into their systems and move on. There are legal risks, operational changes, and accountability questions to resolve.

If an AI system makes a mistake, who is responsible? That question alone slows adoption in high-stakes fields like healthcare and law, and even in customer service.

Regulation also plays a role. Many constraints exist for good reason. They reflect past failures and are designed to prevent new ones. These guardrails naturally limit how fast AI can spread into critical domains.

Between Hype and Reality

There are real risks associated with AI, but treating them as one monolithic threat doesn’t help. Specific problems require specific solutions. Security risks, bias, and misuse are all different challenges, and each needs its own approach.

At the same time, predictions about runaway superintelligence assume a level of certainty that simply doesn’t exist. The future is not something anyone can model perfectly, whether the forecasting is done by humans or by machines.

What feels more plausible is a middle path. AI will keep improving. It will reshape industries. But it will do so unevenly, gradually, and within the same economic and social constraints that shaped every major technology before it.

That might not be as dramatic as the headlines suggest. But it’s far more consistent with how the world actually works.
