The Core Problem OpenAI Is Facing

OpenAI has built some of the most advanced AI systems in the world, but there is a structural limitation it cannot ignore. No matter how capable ChatGPT becomes, it still runs inside mobile ecosystems controlled by Apple and Google.

On Apple's iOS and Google's Android, every AI interaction is constrained by operating system rules. Apps are sandboxed, permissions are restricted, and cross-app automation is tightly controlled. This means even highly capable AI agents cannot freely act across a user’s digital life.

That gap between intelligence and execution is the central problem OpenAI is trying to solve.

The Reported AI Phone Strategy

According to industry reporting, OpenAI is working with chipmakers such as MediaTek and Qualcomm to design processors optimized for AI agent workloads. Manufacturing is reportedly linked to Luxshare Precision, a major global device assembler.

The timeline being discussed suggests a staged rollout:

Chip design finalization around 2026 to 2027

Hardware readiness and ecosystem preparation

Possible mass production around 2028

This indicates a long-term plan rather than a quick consumer gadget experiment. It is closer to platform building than to a product launch.

Why an AI-Native Phone Matters

The reason this matters goes beyond hardware. It is about how computing is structured.

Today’s smartphones are app-centric. Users:

Open apps manually

Switch between services

Copy information across platforms

Re-enter data multiple times

Even with AI assistance, the system is still fragmented.

An AI-native phone would attempt to reverse that structure. Instead of apps being the primary interface, the AI becomes the main control layer. Users would express intent, and the system would decide how to complete tasks across apps and services.

For example:

Booking travel would not require opening multiple apps

Payments could be handled through integrated systems

Scheduling and communication could happen automatically

The device would act less like a phone and more like an autonomous digital assistant.
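The idea of expressing intent once and letting the system coordinate services can be sketched in a few lines. This is only an illustrative toy, not a description of any real OpenAI design: the `Intent` type, the `PLANS` table, and the handlers `book_flight`, `pay`, and `add_calendar_event` are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Intent:
    """A user goal expressed once, instead of a series of manual app actions."""
    name: str
    params: Dict[str, str]


# Hypothetical service handlers standing in for apps the agent would coordinate.
def book_flight(params: Dict[str, str]) -> str:
    return f"flight booked to {params['destination']}"


def pay(params: Dict[str, str]) -> str:
    return f"paid {params['amount']}"


def add_calendar_event(params: Dict[str, str]) -> str:
    return f"calendar event added on {params['date']}"


# Each intent maps to an ordered plan of service calls (hypothetical plans).
PLANS: Dict[str, List[Callable[[Dict[str, str]], str]]] = {
    "book_travel": [book_flight, pay, add_calendar_event],
}


def handle_intent(intent: Intent) -> List[str]:
    """Run every step of the plan for an intent and collect the results."""
    plan = PLANS.get(intent.name)
    if plan is None:
        raise ValueError(f"no plan for intent: {intent.name}")
    return [step(intent.params) for step in plan]


trip = Intent(
    "book_travel",
    {"destination": "Tokyo", "amount": "$800", "date": "2028-03-01"},
)
results = handle_intent(trip)
```

In the app-centric model the user would perform each of these three steps by hand in separate apps. Here the user supplies the intent and the control layer runs the plan.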

Hardware and Ecosystem Control

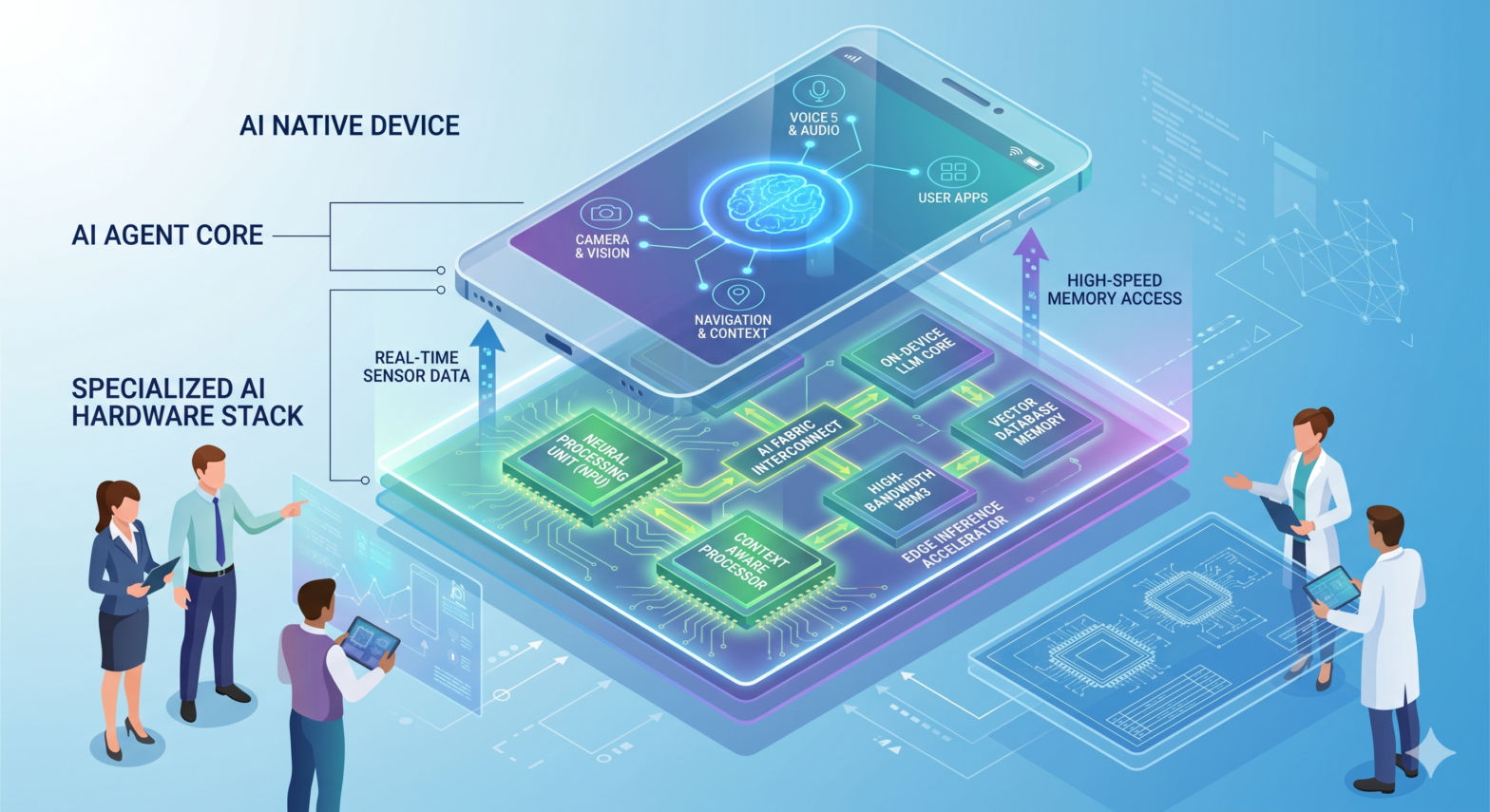

To achieve this, OpenAI would need deeper control over hardware and software integration than current mobile platforms allow.

Reports suggest OpenAI has assembled a hardware team of around 200 people, including former Apple design talent connected to Jony Ive. The involvement of experienced consumer hardware engineers signals that this is not just a software expansion but a full device strategy.

The rumored ecosystem includes:

AI smart speakers as entry devices

AI headphones for continuous assistance

Smart glasses for ambient computing

Pocket-sized AI devices

A long-term smartphone replacement

Each device would act as a different interface layer for the same underlying AI system.

Why Current Phones Are a Bottleneck

Modern smartphones were not designed for autonomous AI agents. Their architecture prioritizes:

App isolation for security

User-controlled permissions

Manual interaction flows

Limited background automation

This design protects users but restricts AI autonomy.

Even advanced assistants cannot fully coordinate across apps without user intervention. That limitation becomes more visible as AI systems become more capable of planning and reasoning.
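A toy model makes the bottleneck concrete. The sketch below assumes a simplified phone where every app action needs an explicitly granted permission. The `SandboxedPhone` class, the `run_plan` helper, and the permission strings are hypothetical and do not correspond to any real iOS or Android API.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class SandboxedPhone:
    """Toy model: each app action needs a permission the user granted explicitly."""
    granted: Set[str] = field(default_factory=set)
    prompts: List[str] = field(default_factory=list)

    def run(self, app: str, action: str) -> bool:
        permission = f"{app}:{action}"
        if permission not in self.granted:
            # The agent cannot proceed on its own; the user must step in.
            self.prompts.append(permission)
            return False
        return True


def run_plan(
    phone: SandboxedPhone, plan: List[Tuple[str, str]]
) -> List[Tuple[str, str]]:
    """Execute steps in order and stop at the first one that needs the user."""
    completed = []
    for app, action in plan:
        if not phone.run(app, action):
            break
        completed.append((app, action))
    return completed


phone = SandboxedPhone(granted={"calendar:read"})
plan = [("calendar", "read"), ("payments", "charge"), ("messages", "send")]
done = run_plan(phone, plan)
```

With only `calendar:read` granted, the plan halts at the payment step and records a prompt for the user. That is the protective behavior described above, and also the reason a multi-step agent plan stalls.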

Global Competition in AI Devices

OpenAI is not the only company moving in this direction. In China, companies are experimenting with AI-first smartphones using different strategies.

One example is the collaboration between ByteDance and ZTE on the Doubao AI phone concept. Instead of deep OS integration, it uses a visual automation approach in which the AI interacts with apps through the interface itself.

This allows rapid functionality but introduces challenges such as:

Security restrictions from major apps

Payment system incompatibilities

Platform blocking risks

Stability concerns

The contrast is clear. Some companies are modifying existing ecosystems, while others are attempting to build entirely new ones.

The Strategic Stakes Behind AI Phones

The importance of AI phones is not just technological. It is structural.

Control over the device means control over:

The user interface layer

Data access and context flow

Application coordination

Digital service distribution

If AI becomes the primary interface, whoever controls that layer effectively controls how users interact with the entire digital economy.

This is why semiconductor companies, manufacturers, and design studios are being integrated early into planning. The goal is not just to build a device, but to build an ecosystem.

The Shift From Apps to Agents

The broader shift can be described simply. Computing is moving from app-driven interaction to intent-driven interaction.

In the app model, users do the work of coordination. In the agent model, AI systems handle coordination across tools and services.

That transition reduces friction but increases dependence on the AI layer itself.