A few months ago, the AI race felt relatively simple.

Labs competed primarily on model intelligence: benchmark scores, reasoning ability, coding performance, and context length. The assumption was that the best model would naturally win users. That assumption is breaking down fast.

What we are watching now is not just a competition between models. It is a battle over workflows, developer ecosystems, and long-term user retention, and that changes everything.

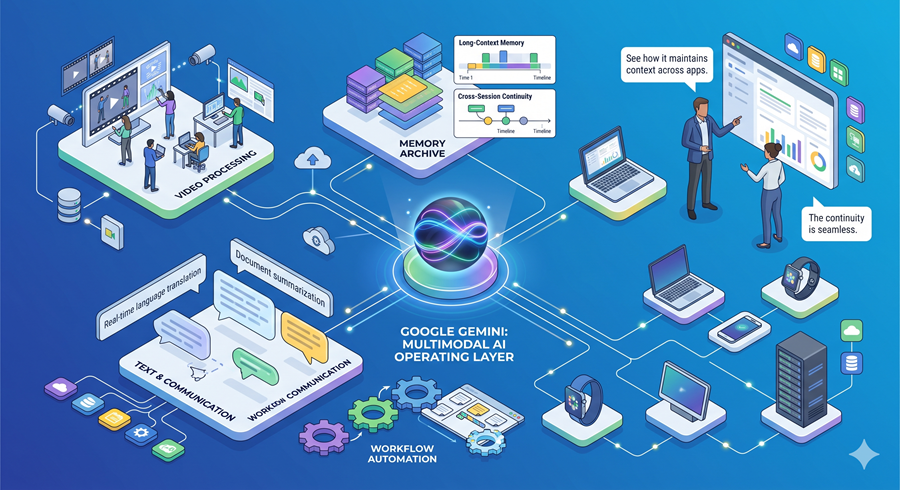

Google Is Betting on Multimodal Infrastructure

Ahead of its developer conference, Google appears to be preparing a much broader push around Gemini.

The leaked direction is revealing: stronger video generation, improved memory systems, long-context workflows, SVG-native generation, and tighter multimodal integration across products.

None of these features matters in isolation. Together, they point toward something larger: Google wants Gemini to become a persistent operating layer rather than just a chatbot.

That distinction matters.

The more context AI systems retain across projects, conversations, media types, and workflows, the harder they become to replace. Switching costs rise naturally once models begin understanding not just prompts, but ongoing user behavior.

Memory may quietly become one of the most important competitive advantages in AI.

Coding Models Are Becoming Creative Engines

One interesting signal is how much attention developers are paying to SVG generation.

At first glance, generating detailed graphics entirely through code feels niche. But structurally, it reveals something important about model capability. Producing high-quality SVG output requires planning, spatial reasoning, and structured generation all at once, and the resulting markup has to be valid enough to render reliably.
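To make "graphics through code" concrete, here is a minimal Python sketch of what such output involves. It is an illustration only, not how any particular model or lab produces SVG: the function name `build_sun_svg`, the proportions, and the colors are all assumptions chosen for the example. Even this toy drawing needs a layout plan (where the disc sits, how long the rays are), some trigonometry for placement, and well-formed markup.

```python
import math
import xml.etree.ElementTree as ET

SVG_NS = "http://www.w3.org/2000/svg"


def build_sun_svg(size: int = 200, rays: int = 8) -> str:
    """Return an SVG document (as a string) of a disc with evenly spaced rays.

    Hypothetical helper for illustration; sizes and colors are arbitrary.
    """
    # Serialize with SVG as the default namespace (no "ns0:" prefixes).
    ET.register_namespace("", SVG_NS)
    center = size / 2
    root = ET.Element(
        f"{{{SVG_NS}}}svg",
        {"width": str(size), "height": str(size), "viewBox": f"0 0 {size} {size}"},
    )

    # Planning: the disc radius is 20% of the canvas size.
    ET.SubElement(
        root,
        f"{{{SVG_NS}}}circle",
        {"cx": str(center), "cy": str(center), "r": str(size * 0.2), "fill": "gold"},
    )

    # Spatial reasoning: each ray runs outward along its own angle,
    # starting just outside the disc and ending near the canvas edge.
    for i in range(rays):
        angle = 2 * math.pi * i / rays
        inner, outer = size * 0.28, size * 0.45
        ET.SubElement(
            root,
            f"{{{SVG_NS}}}line",
            {
                "x1": f"{center + inner * math.cos(angle):.1f}",
                "y1": f"{center + inner * math.sin(angle):.1f}",
                "x2": f"{center + outer * math.cos(angle):.1f}",
                "y2": f"{center + outer * math.sin(angle):.1f}",
                "stroke": "orange",
                "stroke-width": "4",
            },
        )

    # Structured generation: the result must be well-formed XML to render.
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(build_sun_svg())
```

Saving the printed output to a `.svg` file and opening it in a browser should show the drawing. A model generating SVG has to get the same three things right without a helper library: the plan, the coordinates, and the syntax.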

It is not really about circles or illustrations.

It is about whether models can consistently translate abstract visual intent into precise, working code. That ability extends far beyond design tasks.

The Real Competition Is Workflow Capture

At the same time, OpenAI appears to be aggressively targeting developer workflows through Codex.

What stands out is not just model quality improvements, but the migration strategy itself. Importing configurations, preserving environments, onboarding non-developers, and integrating external workflows all point toward platform consolidation.

This is the same playbook successful software ecosystems have always used.

Once users build habits, configurations, project histories, and operational systems around a platform, leaving becomes increasingly expensive, both psychologically and technically. The AI assistant stops being a tool and starts becoming infrastructure.

Anthropic’s Problem Isn’t Intelligence

What makes this particularly interesting is that Anthropic’s challenge does not appear to be model quality alone. The larger issue is usability at scale.

Rate limits, workflow friction, and infrastructure constraints matter enormously once AI becomes integrated into professional development environments. Developers optimize around reliability as much as intelligence. A slightly weaker system with smoother execution often wins real adoption.

That is why infrastructure and product design increasingly matter just as much as raw capability.

The Industry Is Entering the Ecosystem Phase

The broader pattern is becoming obvious.

Google is pushing multimodal persistence. OpenAI is pushing workflow ownership. Anthropic is pushing autonomous agent systems. Everyone is racing to become the default interface between humans and digital work.

The next phase of AI competition will probably not be decided solely by which lab builds the smartest model.

It will be decided by which ecosystem users build their professional lives around before switching becomes difficult, and that race has already started.
