Most AI product updates are incremental: a few new features, a slightly better model, a cleaner interface. They are useful but rarely transformative. This Gemini release feels different because it changes what the product is becoming.

Google is no longer positioning Gemini as a chat tool. It is turning it into a persistent work layer embedded across daily workflows. That is a much bigger move than shipping another assistant.

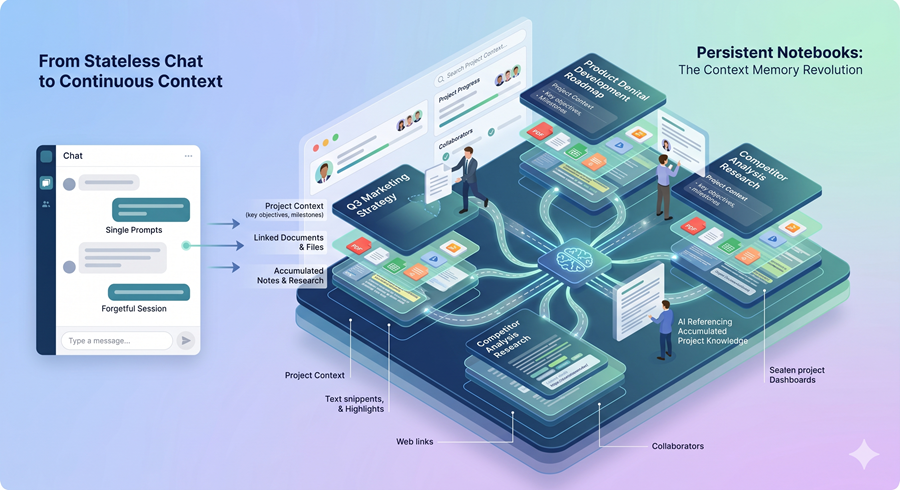

Notebooks Change the Core Behavior of AI

The most important update is notebooks. Until now, most AI chat tools have been effectively stateless across sessions. You ask a question, get an answer, and start over the next time. That model works, but it creates friction because context has to be rebuilt in every session.

Notebooks change that dynamic. Instead of prompting from scratch, I can now anchor work inside persistent project context: notes, documents, research, and operating assumptions. The system responds from accumulated knowledge, not isolated prompts.

That turns AI from reactive software into cumulative infrastructure. Over time, the output becomes less generic and more operationally useful because the system is working from actual context, not reconstructed memory.
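To make the contrast concrete, here is a minimal sketch of the difference between a stateless request and one anchored in persistent project context. It is only an illustration: the `Notebook` class, the `stateless_prompt` and `notebook_prompt` functions, and the sample project notes are all hypothetical names invented for this example, not part of any Gemini API.

```python
from dataclasses import dataclass, field


@dataclass
class Notebook:
    """Hypothetical persistent project context (not a real Gemini API)."""
    name: str
    sources: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        # Context accumulates across sessions instead of being retyped.
        self.sources.append(text)


def stateless_prompt(question: str) -> str:
    # Nothing carries over: the model sees only the question itself.
    return question


def notebook_prompt(notebook: Notebook, question: str) -> str:
    # The accumulated project context is prepended to every request.
    context = "\n".join(f"- {s}" for s in notebook.sources)
    return f"Project: {notebook.name}\nContext:\n{context}\n\nQuestion: {question}"


# Illustrative project notes (made up for this sketch).
nb = Notebook("Q3 launch")
nb.add("Target audience: small retail teams")
nb.add("Budget assumption: no paid ads")

question = "Draft an outreach plan."
print(stateless_prompt(question))
print(notebook_prompt(nb, question))
```

In the stateless case the model receives only the question. In the notebook case the same question arrives together with the audience and budget assumptions, which is why the answer can be less generic.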

Google Wants Gemini to Produce Work, Not Just Answers

The second major shift is file generation. This matters because it moves Gemini from idea generation into execution.

Generating a useful response is one thing. Producing a finished document, spreadsheet, slide deck, or PDF inside the same workflow is something else entirely. That closes the gap between asking for help and shipping work.

This is where most AI tools still fall short. They generate content, but the user still has to format, structure, export, and put it to use. Google is removing that friction.

That is how AI becomes part of real work instead of an extra step around it.

The Real Strategy Is Ecosystem Depth

The broader pattern matters more than any single feature. Persistent notebooks, app-level access, file generation, workspace integrations, and personalized context all point in the same direction: Google is building Gemini as infrastructure inside its existing software stack.

That gives it a strategic advantage most competitors cannot replicate. Google does not need to convince users to adopt an entirely new workflow. It only needs to make Gemini useful inside the workflows people already use.

That is a much easier distribution problem to solve.

Why This Shift Matters More Than the Model Race

The long-term AI winners will not be defined by model quality alone. They will be defined by how deeply they integrate into everyday work.

That is what makes this update important. Gemini is becoming less of a destination and more of an operating layer: persistent, connected, and increasingly built into the systems people already rely on.

That is how AI stops being a tool people visit and starts becoming infrastructure they work through.