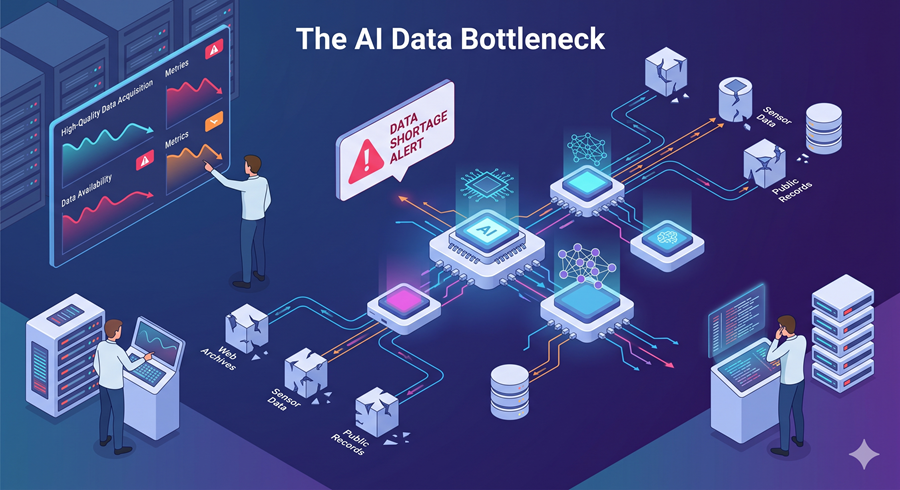

I keep hearing conversations about bigger models, faster chips, and more compute. But the real bottleneck in AI right now feels much simpler and far more dangerous. We are running out of good data.

Why the Internet Is No Longer Enough

For years, AI has relied on the internet as its primary training ground. Text, images, code, and conversations were scraped at scale and fed into models. That approach worked incredibly well for building general-purpose systems.

But now the problem is changing. The next phase of AI is not about general knowledge. It is about specialized intelligence in domains like cybersecurity, legal reasoning, and medical decision-making. And the data required for those domains is scarce, expensive, or locked behind strict regulations.

This creates a ceiling. No matter how powerful models become, they cannot learn what they do not have access to.

Synthetic Data as a Designed System

This is where synthetic data starts to matter. Instead of collecting information from the real world, systems are beginning to generate their own training data. But the real shift is not just generation. It is design.

Rather than randomly producing examples, newer approaches start by mapping an entire domain. They break it down into structured categories, subcategories, and relationships. From there, data is generated with intention, not guesswork.
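To make the idea of mapping a domain first more concrete, here is a minimal sketch of how I picture it. The domain map, the scenario types, and the `build_generation_plan` function are all hypothetical names I made up for illustration, not any particular system's method. The point is only that every cell of the map gets a generation request before any data is produced.

```python
from itertools import product

# Hypothetical domain map for illustration; a real one would be far larger.
DOMAIN_MAP = {
    "cybersecurity": {
        "phishing": ["email", "sms"],
        "malware": ["ransomware", "trojan"],
    },
}
# Assumed scenario labels, so both typical and rare cases are planned for.
SCENARIO_TYPES = ["common", "edge"]


def build_generation_plan(domain_map, scenario_types):
    """Return one generation request per (domain, category, subcategory, scenario)."""
    plan = []
    for domain, categories in domain_map.items():
        for category, subcategories in categories.items():
            for subcategory, scenario in product(subcategories, scenario_types):
                plan.append({
                    "domain": domain,
                    "category": category,
                    "subcategory": subcategory,
                    "scenario": scenario,
                })
    return plan


plan = build_generation_plan(DOMAIN_MAP, SCENARIO_TYPES)
for request in plan[:3]:
    print(request)
```

Each request would then be handed to whatever generator you use, so the structure of the dataset is decided up front rather than left to chance.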

This solves a major issue in AI training: repetition. Left unguided, generators tend to produce similar outputs over time, missing rare but important cases. By designing the data space first, these systems can ensure coverage across both common and edge scenarios.
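One simple way to check coverage is to count how many generated examples landed in each planned cell and flag the empty ones. The sketch below assumes each example carries a `topic` and a `scenario` label, and `coverage_gaps` is a hypothetical helper of my own, not part of any library.

```python
from collections import Counter


def coverage_gaps(examples, expected_cells, min_count=1):
    """Return the planned (topic, scenario) cells with fewer than min_count examples."""
    counts = Counter((e["topic"], e["scenario"]) for e in examples)
    return sorted(cell for cell in expected_cells if counts[cell] < min_count)


# Toy data: the generator kept producing common cases and skipped the edge ones.
examples = [
    {"topic": "phishing", "scenario": "common"},
    {"topic": "phishing", "scenario": "common"},
    {"topic": "malware", "scenario": "common"},
]
expected_cells = [
    ("phishing", "common"),
    ("phishing", "edge"),
    ("malware", "common"),
    ("malware", "edge"),
]

gaps = coverage_gaps(examples, expected_cells)
print(gaps)
```

The cells that come back are the ones to generate next, which is how rare cases stop being missed by accident.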

Controlling Complexity Without Losing Quality

Another layer that stands out is the ability to control difficulty. Data is no longer uniform. It can be adjusted to include more nuanced, complex, or realistic scenarios.
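As a rough illustration of what controlling difficulty could look like, the sketch below splits a generation budget across difficulty levels according to a chosen mix. The level names, the weights, and the `allocate_by_difficulty` function are assumptions of mine for the example.

```python
def allocate_by_difficulty(total, mix):
    """Split `total` examples across difficulty levels in proportion to `mix` weights."""
    weight_sum = sum(mix.values())
    exact = {level: total * weight / weight_sum for level, weight in mix.items()}
    counts = {level: int(share) for level, share in exact.items()}

    # Rounding down can leave a few examples unassigned; give them to the
    # levels with the largest fractional remainders.
    leftover = total - sum(counts.values())
    by_remainder = sorted(exact, key=lambda lvl: exact[lvl] - counts[lvl], reverse=True)
    for level in by_remainder[:leftover]:
        counts[level] += 1
    return counts


# Assumed mix: twice as many medium examples as easy or hard ones.
counts = allocate_by_difficulty(10, {"easy": 1, "medium": 2, "hard": 1})
print(counts)
```

Changing the weights shifts the dataset toward harder or easier material without touching the rest of the pipeline.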

This matters because real-world problems are rarely simple. Training on shallow examples limits performance. But increasing complexity introduces risk. If the system generating the data is flawed, those flaws get amplified.

To address this, validation becomes critical. Instead of assuming correctness, newer methods actively test both sides of an answer, asking whether something is right and whether it might be wrong. This reduces the tendency of AI to accept plausible but incorrect outputs.
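Here is a small sketch of testing both sides of an answer. In a real pipeline the two checkers would probably be model calls or domain-specific verifiers; in this example they are toy arithmetic functions standing in for them, and `dual_check` is a hypothetical name of mine.

```python
def dual_check(example, argue_correct, argue_wrong):
    """Accept an example only if it is supported and not successfully challenged."""
    supported = argue_correct(example)
    challenged = argue_wrong(example)
    if supported and not challenged:
        return "accept"
    if challenged and not supported:
        return "reject"
    # The two sides disagree with themselves, so a human or a stronger check decides.
    return "review"


def argue_correct(example):
    # Stand-in for asking "is this answer right?": recompute the sum directly.
    return example["a"] + example["b"] == example["answer"]


def argue_wrong(example):
    # Stand-in for asking "could this be wrong?": check by a different route.
    return example["answer"] - example["b"] != example["a"]


good = {"a": 2, "b": 3, "answer": 5}
bad = {"a": 2, "b": 3, "answer": 6}
print(dual_check(good, argue_correct, argue_wrong))
print(dual_check(bad, argue_correct, argue_wrong))
```

The useful part is the third outcome: anything that is neither cleanly supported nor cleanly refuted gets held back instead of slipping into the training set.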

From Data Collection to Data Engineering

What I find most interesting is how this changes the competitive landscape. For a long time, the advantage in AI was access to more data. The bigger your dataset, the better your model.

Now that advantage is shifting. It is becoming less about who has the most data and more about who can design the best data. That is a completely different skill set. It turns data into an engineering problem rather than a collection problem.

If this trend continues, the reliance on massive real-world datasets could decrease. Synthetic data may not replace reality entirely, but it will likely become a core part of how models are trained.

Why Understanding AI Matters as Much as Building It

At the same time, another problem is becoming impossible to ignore. As AI systems grow more complex, understanding what they are doing becomes harder.

Modern AI is moving toward agents. These are systems that plan, execute tasks, and interact with tools across multiple steps. When something goes wrong, the output is not a simple error. It is a tangled chain of actions, logs, and decisions.

This makes debugging incredibly difficult.

The Rise of Tools That Explain AI Behavior

To deal with this, new tools are emerging that focus on visibility. Instead of raw logs, they present a structured timeline of what the AI did, step by step.
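To show the difference between raw logs and a structured timeline, here is a minimal sketch. The event fields (`t`, `kind`, `detail`, `ok`) and the `build_timeline` function are my own assumptions, not the format of any specific tool.

```python
from dataclasses import dataclass


@dataclass
class Step:
    t: float       # seconds since the run started
    kind: str      # e.g. "plan", "tool_call", "result"
    detail: str
    ok: bool = True


def build_timeline(events):
    """Turn unordered event dicts into numbered, time-ordered timeline lines."""
    steps = sorted((Step(**event) for event in events), key=lambda s: s.t)
    lines = []
    for number, step in enumerate(steps, start=1):
        status = "ok" if step.ok else "FAILED"
        lines.append(f"{number}. [{step.t:.1f}s] {step.kind}: {step.detail} ({status})")
    return lines


# Toy events, deliberately out of order, as raw logs often are.
events = [
    {"t": 2.4, "kind": "tool_call", "detail": "search_docs", "ok": False},
    {"t": 0.0, "kind": "plan", "detail": "answer user question"},
    {"t": 1.1, "kind": "tool_call", "detail": "load_config"},
]

timeline = build_timeline(events)
for line in timeline:
    print(line)
```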

This changes how developers interact with these systems. They can trace decisions, identify failures, and understand behavior in a way that was previously hidden. It turns debugging from guesswork into analysis.
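Once steps are structured, identifying a failure can be as simple as walking the run until the first step that errored and keeping everything that led up to it. The step format and the `trace_to_failure` helper below are hypothetical, just a sketch of the idea.

```python
def trace_to_failure(steps):
    """Return the steps up to and including the first error, or [] if none failed."""
    for index, step in enumerate(steps):
        if step["status"] == "error":
            return steps[: index + 1]
    return []


# Toy agent run: the third step fails, so the fourth is not part of the cause.
run = [
    {"name": "plan", "status": "ok"},
    {"name": "fetch_data", "status": "ok"},
    {"name": "parse_data", "status": "error"},
    {"name": "write_report", "status": "error"},
]

trace = trace_to_failure(run)
print([step["name"] for step in trace])
```

Cutting the run off at the first failure keeps attention on the cause rather than on the errors that cascaded from it.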

And as AI shifts toward long-running workflows, this kind of visibility becomes essential.