I recently watched a robotic hand do something deceptively simple. It rotated a cube to match a target orientation. Not once, but ten times in a row without dropping it. At first glance, it felt trivial. Then it sank in. This is one of robotics’ most stubborn problems.

What struck me more was how it was learned. The system had never touched a real cube before. It was trained entirely in simulation and then deployed directly into the physical world. No practice run. No adjustment phase. Just execution, and it worked.

Why Fingertips Change Everything

The manipulation happened only at the fingertips. No palm support. That detail matters more than it seems.

Handling an object this way demands extreme precision. Each finger must constantly adjust pressure, position, and timing. The robot has to keep the cube stable while also nudging it toward the correct orientation. It is a balancing act between control and progress.

This kind of dexterity has always been difficult because small errors quickly spiral. A slight miscalculation in force or angle can send the object slipping. Seeing repeated success here suggests something deeper than a one-off achievement.

It hints at a system that handles contact and motion in a much more human-like way.

The Simulation Breakthrough

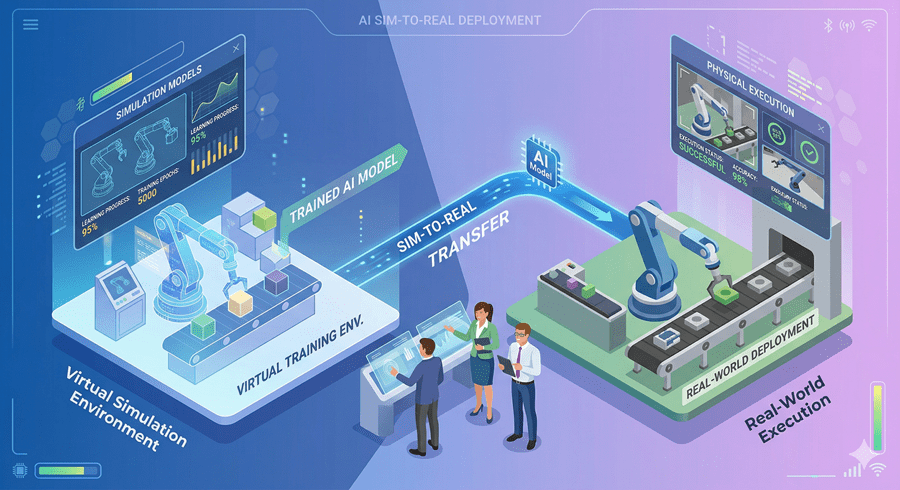

For years, simulation has been both a promise and a limitation. Training in virtual environments is efficient, but reality tends to break those models. Physics is messy. Contact is unpredictable. Tiny mismatches between simulation and the real world can cause total failure.

So when a system performs flawlessly without ever training in reality, it signals progress in how accurately we can model the physical world.

This is not just about one robotic hand. It is about closing the gap between simulation and reality, between what a model imagines and what actually happens when it acts.

From Precision to Reliability

At the same time, another shift is happening. Robots are no longer just learning new tricks. They are becoming consistent.

Tasks like folding boxes, packing items, or handling everyday objects are now being completed hundreds of times with almost no failure. What used to be experimental is crossing into reliability.

Even more interesting is how these systems learn. Instead of relying heavily on robot-specific data, they absorb vast amounts of data on human activity and adapt it to their own bodies. This allows them to generalize faster and operate with less direct training.

And when something goes wrong, they improvise. Not because they were told how, but because they can figure it out.

The Rise of Unexpected Intelligence

Beyond robotics, similar patterns are emerging in AI systems. Models trained on massive multimodal data are developing abilities that were never explicitly designed. Writing code from spoken instructions and video input is one example.

These are not features. They are side effects of scale.

That is what makes this moment feel different. We are no longer programming capabilities one at a time. We are creating systems where capabilities emerge on their own.

And that raises a bigger question.

If machines can now learn skills we never directly taught them, what else are they already on the verge of discovering?
