For the past few years, I’ve been surrounded by one loud, persistent idea: artificial intelligence is about to change everything overnight. It will either elevate humanity to unimaginable heights or quietly replace us altogether. There seems to be no middle ground.

But what if that framing is wrong?

What if AI isn’t a sudden revolution, but something far more familiar?

The Myth of Overnight Transformation

It’s easy to believe we’re on the edge of a dramatic shift. Companies are pouring staggering amounts of money into AI, and headlines constantly hint at massive disruption. The scale makes it feel immediate and inevitable.

Yet history tells a different story. Technologies like electricity and the internet didn’t flip the world upside down in a single moment. They seeped into daily life gradually, reshaping systems piece by piece.

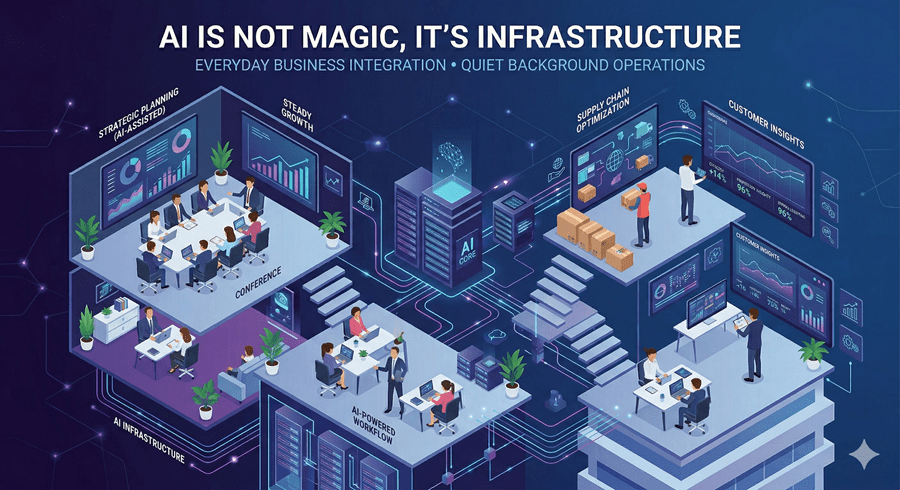

AI, I’m starting to realize, is following that same path. It’s powerful, yes, but not magical. It doesn’t bypass economic realities or human limitations. It integrates slowly, often unevenly, and sometimes awkwardly.

Capability Is Not the Same as Reliability

One of the biggest misconceptions I’ve noticed lies in how we measure AI’s progress. We tend to focus on what it can do in ideal conditions. But real life isn’t ideal.

It’s one thing for a system to answer a question correctly once. It’s another for it to do so consistently, across thousands of situations, without error or confusion. Reliability matters more than raw capability, especially in high-stakes environments.

Without that consistency, replacing humans becomes far more complicated than it sounds.
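
To make the gap between capability and reliability concrete, here is a rough back-of-the-envelope sketch. It assumes every task is independent and that the system has the same fixed chance of getting each one right. Both are simplifications, and the accuracy figures are illustrative, not measurements of any real system. The function name is my own.

```python
def chance_of_flawless_run(per_task_accuracy: float, tasks: int) -> float:
    """Probability that all `tasks` attempts succeed.

    Assumes attempts are independent and share one fixed accuracy,
    which is a simplification of how real systems behave.
    """
    return per_task_accuracy ** tasks


if __name__ == "__main__":
    # Illustrative accuracy levels, not measured values.
    for accuracy in (0.99, 0.999):
        for tasks in (10, 100, 1000):
            flawless = chance_of_flawless_run(accuracy, tasks)
            print(f"{accuracy:.1%} per task, {tasks} tasks: "
                  f"{flawless:.1%} chance of zero errors")
```

Under these assumptions, a system that is right 99% of the time has only about a 37% chance of getting through 100 tasks without a single mistake. Small per-task error rates compound quickly at scale.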

Why AI Might Create More Work, Not Less

There’s a fear that knowledge workers are becoming obsolete. I used to wonder about that too.

But the more I think about it, the more it feels backward.

When technology helps me answer questions faster, it doesn’t reduce my workload. It expands it. Every answer opens the door to new questions, deeper exploration, and more complex problems.

Instead of shrinking human contribution, AI often amplifies it. It shifts the nature of work rather than eliminating it.

The Hidden Friction Slowing Everything Down

If AI is so capable, why hasn’t it already taken over obvious roles like customer service or healthcare decision-making?

The answer lies in friction.

Real-world systems involve risk, accountability, and legal consequences. When an AI makes a mistake, someone has to take responsibility. That alone slows adoption dramatically.

There are also regulations, ethical concerns, and organizational challenges. These are not bugs in the system. They are necessary safeguards. Progress depends not just on what’s possible, but on what’s acceptable.

The Future Is Still Unwritten

Some warn that AI will become uncontrollably intelligent, surpassing human understanding. Others dismiss those fears entirely. I find myself somewhere in between.

There are real risks worth addressing, but treating AI as a single, all-powerful force oversimplifies the problem. Different risks require different solutions. There is no universal fix.

And perhaps most importantly, neither we nor AI can truly predict what comes next. The future isn’t a dataset waiting to be analyzed. It’s something we are actively shaping, decision by decision.

That uncertainty isn’t a flaw. It’s reality.
