I’ve seen plenty of updates that promise more than they deliver. This one is different. OpenClaw 4.1 doesn’t just add features. It fixes the friction that made running AI agents feel unreliable in real workflows.

If you’re using agents for business, this release is less about innovation and more about trust.

Finally, Real Visibility Into Your Agents

Before this update, running multiple agents felt like guesswork. I’d launch tasks and hope everything worked, only to discover failures later when something downstream broke.

Now, there’s a built-in task board that shows everything in real time. What’s running, what finished, and what failed are all visible in one place.

That single change shifts how I manage automation. I spend less time checking logs and more time actually improving workflows. It turns agents from something I monitor into something I oversee.

Failover That Actually Works

Failover used to exist in theory but not in practice. When a model hit its limits, agents kept retrying the same provider instead of switching to another one.

Now, retries are capped, and rotation between providers is controlled. If one model stalls, the system moves on cleanly.

This matters more than it sounds. Overnight workflows stop breaking silently. Tasks don’t get stuck in loops. Reliability goes up without extra effort.
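To make the idea concrete, here is a minimal sketch of capped retries with ordered provider rotation. It is not OpenClaw's actual implementation or API; `run_with_failover`, `ProviderError`, and the two demo providers are hypothetical names I made up for illustration, and the real release may handle rotation differently.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Hypothetical error for a failed provider call (rate limit, timeout, ...)."""


def run_with_failover(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
    max_attempts_per_provider: int = 2,
) -> Tuple[str, str]:
    """Try providers in order, giving each a capped number of attempts.

    Returns (provider_name, result). Raises RuntimeError once every
    provider has used up its attempts, so the task fails loudly
    instead of looping forever.
    """
    errors: List[str] = []
    for name, call in providers:
        for attempt in range(1, max_attempts_per_provider + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt}: {exc}")
        # Attempts for this provider are exhausted: rotate to the next one.
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real model providers.
def always_limited(prompt: str) -> str:
    raise ProviderError("rate limited")


def backup(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    used, answer = run_with_failover(
        "summarize inbox",
        [("primary", always_limited), ("backup", backup)],
    )
    print(used, answer)
```

The important part is the cap. Without it, the inner loop is exactly the "keep retrying the same provider" behavior described above.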

Smarter Web Search and Data Control

Web search is no longer a fragile add-on. With a built-in, configurable search system, I can control how agents gather information.

That means less dependence on external APIs and more control over cost and data. For research, content, or monitoring tasks, this is a meaningful upgrade.

It also makes workflows more private and predictable, which is critical when working with client data.

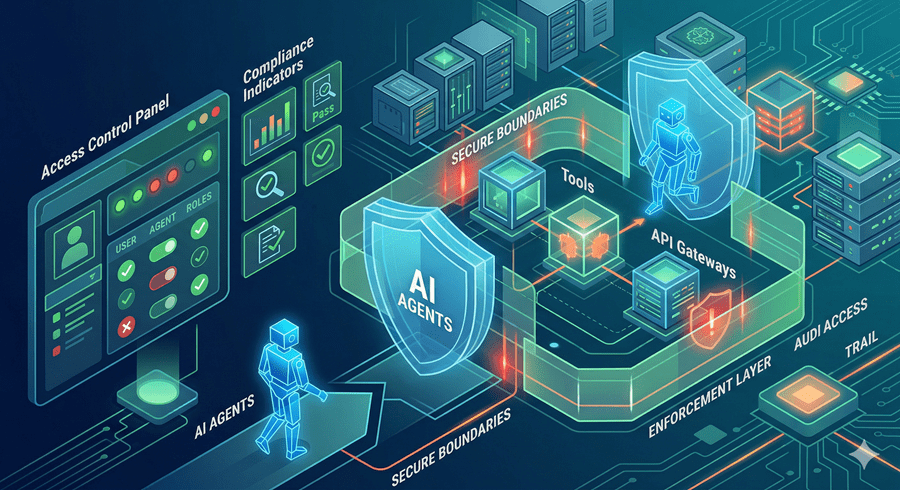

Better Security and Tighter Control

One of the most underrated improvements is how much control I now have over what agents can do.

Scheduled tasks can be restricted to only the tools they actually need. That reduces unnecessary API calls and limits risk. If something goes wrong, it stays contained.
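As a rough illustration of that kind of restriction, the sketch below wraps a set of tools in a per-task allowlist. Everything here is an assumption on my part: `ScopedToolbox`, `ToolNotAllowed`, and the demo tools are hypothetical names, not OpenClaw's configuration format or API.

```python
from typing import Callable, Dict, Iterable


class ToolNotAllowed(PermissionError):
    """Hypothetical error raised when a task calls a tool outside its allowlist."""


class ScopedToolbox:
    """Expose only an allowlisted subset of tools to a scheduled task."""

    def __init__(self, tools: Dict[str, Callable[..., object]], allowed: Iterable[str]):
        allowed_names = frozenset(allowed)
        unknown = allowed_names - set(tools)
        if unknown:
            # Catch typos in the allowlist at setup time, not mid-run.
            raise ValueError(f"unknown tools in allowlist: {sorted(unknown)}")
        self._tools = {name: tools[name] for name in allowed_names}

    def call(self, name: str, *args, **kwargs):
        if name not in self._tools:
            raise ToolNotAllowed(f"tool {name!r} is not allowed for this task")
        return self._tools[name](*args, **kwargs)


# Hypothetical tools an agent might have access to.
def read_inbox() -> list:
    return ["invoice.pdf"]


def send_email(to: str, body: str) -> str:
    return f"sent to {to}"


ALL_TOOLS = {"read_inbox": read_inbox, "send_email": send_email}

if __name__ == "__main__":
    # A nightly digest task only needs to read, so that is all it gets.
    digest_tools = ScopedToolbox(ALL_TOOLS, allowed=["read_inbox"])
    print(digest_tools.call("read_inbox"))
```

A task scoped this way cannot send email even if a bad prompt tells it to, which is what "it stays contained" means in practice.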

There’s also deeper integration with infrastructure-level safeguards, making it easier to enforce content rules and maintain compliance without manual oversight.

Small Changes That Make a Big Difference

Some updates seem minor but completely change the experience.

Voice activation makes interaction feel natural. Model switching no longer interrupts active tasks. Memory persistence works properly across restarts.

On top of that, dozens of stability fixes quietly remove the bugs that used to cause random failures, stalled processes, and broken executions.

Individually, these changes are small. Together, they make the system feel dependable.

What stands out most to me is this: OpenClaw 4.1 closes the gap between experimentation and real-world usage.

Running AI agents is no longer just about what’s possible. It’s about what actually works, consistently, at scale. And right now, that reliability is where the real advantage is.
