For a long time, I believed the hierarchy in artificial intelligence was settled. One model stood clearly above the rest, shaping how developers built, how businesses operated, and how researchers explored possibilities. Then something unexpected happened. A new contender emerged without noise, without spectacle, but with results that were impossible to ignore.

A Shift Hidden in Plain Sight

When Claude 3 arrived, it didn’t try to dominate attention. Instead, it focused on capability. And that choice made all the difference. While most people were still anchored to old assumptions, the numbers began telling a different story.

Across complex reasoning tasks, math challenges, and coding benchmarks, Claude 3 showed clear and often significant improvements over the models that came before it. These weren’t marginal gains. They represented a real leap in how well an AI system could think through problems and deliver accurate results.

What struck me most was not just that it performed better, but how consistently it did so across different domains.

Why Benchmarks Suddenly Matter Again

Benchmarks often feel abstract, but this time they reveal something meaningful. Claude 3 doesn’t just process information faster or more efficiently. It understands context at a deeper level.

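One example that stood out to me was its reported ability to recognize when a test itself was artificial. In a needle-in-a-haystack retrieval evaluation, the model remarked that the planted sentence looked out of place and might have been inserted to check whether it was paying attention. That level of awareness goes beyond pattern recognition. It hints at a more refined understanding of intent and structure.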

Then there’s the context window. Claude 3 launched with a 200,000-token window, and being able to handle that much information at once changes how I approach problems. Instead of breaking them into smaller pieces, I can now think in terms of complete systems, full documents, or entire datasets. That shift alone redefines what feels possible.

The Power of Quiet Design Choices

What makes this evolution even more interesting is the philosophy behind it. Instead of racing to release features, Anthropic focused on building something reliable, honest, and safe.

This approach shows up in subtle but important ways. The model is less likely to fabricate answers and more willing to admit uncertainty. That might sound small, but in practice, it builds trust. And trust is what turns a useful tool into a dependable one.

I’ve noticed that responses feel more natural, too. There’s a clarity and flow that makes interactions smoother, especially in writing and communication tasks.

Where It Actually Changes Work

The real impact isn’t in test scores. It’s in how work gets done.

With a larger context window, I can analyze entire codebases, review long research papers, or synthesize multiple sources without losing track of the bigger picture. Tasks that once required careful segmentation now feel seamless.

For anyone working across languages or managing global content, the improvements are even more noticeable. Consistency and accuracy across different contexts become a real advantage, not just a theoretical one.

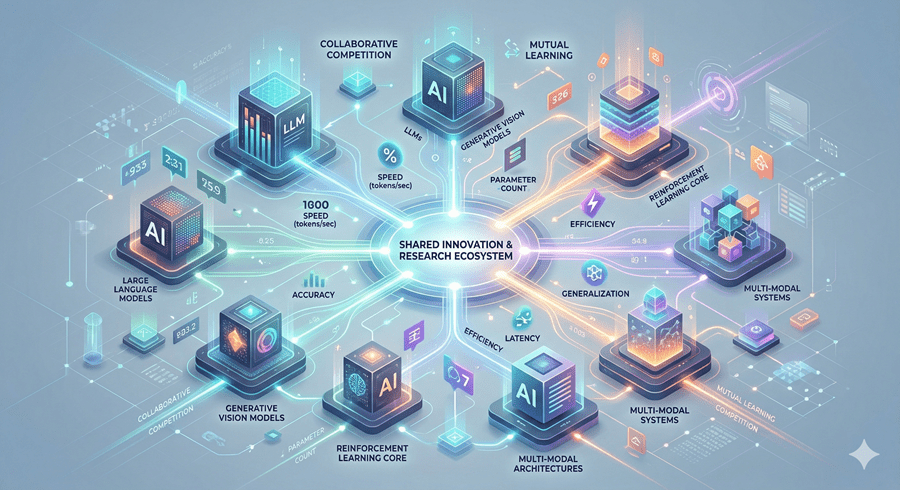

A New Kind of Competition

What’s happening here isn’t just about one model outperforming another. It’s about the landscape becoming genuinely competitive.

For the first time in a while, I feel like the choice of AI tools actually matters again. It’s no longer about defaulting to a single option. It’s about evaluating what truly works best for the task at hand.

And maybe that’s the most important shift of all. The biggest changes don’t always arrive with noise. Sometimes they unfold quietly, reshaping expectations one result at a time.
