I’ve been watching the rise of AI, and one thing is clear. The real battle is not just about models or apps. It is about the chips powering everything behind the scenes.

From massive data centers to the phone in my hand, AI chips are quietly shaping the future.

Why GPUs Became the Backbone of AI

It all started with a simple strength. GPUs are incredibly good at doing many calculations at once. Originally built for graphics, they turned out to be a strong fit for training AI models, which boil down to enormous numbers of similar arithmetic operations.

When neural networks began outperforming traditional systems, GPUs became essential. Their ability to process large amounts of data in parallel made them ideal for both training and running AI systems.
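
To make "in parallel" concrete, here is a minimal Python sketch of one neural network layer. Each output value is a dot product that depends only on its own row of weights, so the rows can be computed in any order or all at once. The function names, the tiny weight matrix, and the thread pool are my own illustrative assumptions. The thread pool only stands in for the idea of many workers; it is not how a GPU is programmed and will not make this toy example faster.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, vec):
    """Multiply matching elements and add them up (one output neuron)."""
    return sum(w * x for w, x in zip(row, vec))


def layer_sequential(weights, inputs):
    """Compute every output one after another."""
    return [dot(row, inputs) for row in weights]


def layer_parallel(weights, inputs, workers=4):
    """Compute every output independently, handing rows to separate workers.

    No row needs the result of another row, which is the property
    that lets parallel hardware work on all of them at the same time.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, inputs), weights))


# Hypothetical 4x3 weight matrix and a 3-element input.
weights = [
    [1.0, 2.0, 3.0],
    [0.5, 0.0, -1.0],
    [2.0, 2.0, 2.0],
    [-1.0, 1.0, 0.0],
]
inputs = [1.0, 2.0, 3.0]

sequential_result = layer_sequential(weights, inputs)
parallel_result = layer_parallel(weights, inputs)
print(sequential_result)                     # [14.0, -2.5, 12.0, 1.0]
print(parallel_result == sequential_result)  # True
```

A real model repeats this pattern across layers with millions or billions of weights, which is why hardware with thousands of simple parallel units pays off.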

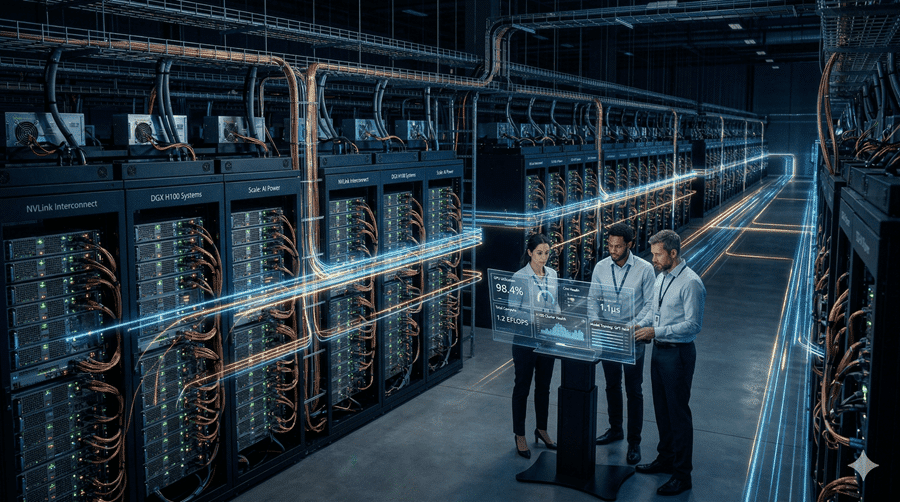

Today, they dominate the most demanding workloads. Entire server systems packed with GPUs, linked by fast interconnects, can act like a single supercomputer. This is what powers the advanced AI models and applications I use every day.

The Shift Toward Inference

As AI evolves, the focus is changing. Training models was the priority early on, but the real value now comes from using them.

This is called inference. It is what happens when a trained model responds to a query, recommends a product, or processes speech in real time.

Inference does not always need massive hardware. That opens the door for new types of chips that are cheaper and more efficient for specific tasks.
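
A rough way to see why inference can be lighter than training is to compare the two on a toy model. The sketch below uses a one-variable linear model that I made up for illustration; `predict`, `train_step`, the learning rate, and the data are all assumptions, not anything a real AI system uses as-is. Training repeats a forward pass, a gradient calculation, and a weight update many times. Inference is a single forward pass with the weights already fixed.

```python
def predict(w, b, x):
    """Inference: one forward pass with fixed weights."""
    return w * x + b


def train_step(w, b, x, y_true, lr):
    """Training: forward pass, then gradients, then a weight update."""
    error = predict(w, b, x) - y_true   # forward pass and loss signal
    grad_w = 2 * error * x              # gradient of squared error w.r.t. w
    grad_b = 2 * error                  # gradient of squared error w.r.t. b
    return w - lr * grad_w, b - lr * grad_b


# Hypothetical training data that follows y = 2x + 1.
data = [(1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

# Training: thousands of repeated steps over the data.
w, b = 0.0, 0.0
for _ in range(2000):
    for x, y in data:
        w, b = train_step(w, b, x, y, lr=0.05)

# Inference: a single cheap call per request.
print(round(predict(w, b, 4.0), 6))  # close to 9.0
```

Even in this toy case, training touches the model thousands of times while each prediction touches it once. Real systems differ in scale, not in that basic asymmetry.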

Custom ASICs: Built for One Job

This is where custom ASICs enter the picture. Unlike GPUs, these chips are designed for a single purpose.

They are faster and more power-efficient for that task, but they lack flexibility. Once the silicon is manufactured, its logic is fixed, so it adapts poorly to workloads it was not designed for.

Big tech companies are investing heavily here. Designing custom chips helps reduce costs, improve efficiency, and decrease reliance on third parties.

However, building these chips is expensive. Only the largest players can afford to develop them at scale.

Edge AI and the Rise of NPUs

Not all AI happens in data centers. Increasingly, it runs directly on devices.

That is where NPUs come in. These small, specialized processors are built into phones, laptops, and even cars.

Running AI locally cuts latency, reduces cloud costs, and protects privacy. I do not need to send data to a server if my device can process it on the spot.

This shift suggests a future where AI is everywhere, not just in the cloud.

A Crowded and Competitive Future

The AI chip landscape is getting more complex. GPUs, ASICs, NPUs, and even reprogrammable chips like FPGAs all play different roles.

At the center of it all is manufacturing. Most advanced chips rely on a small number of foundries, creating both opportunity and risk.

Meanwhile, demand for power and infrastructure continues to grow alongside AI itself.

Despite rising competition, the current leaders still have a strong advantage. Years of ecosystem building and innovation are hard to replicate.

But one thing feels certain to me. This is only the beginning. As AI expands, the race to build faster, cheaper, and more efficient chips will define the next era of technology.
