I have been watching AI evolve quickly, but this week felt different. Not louder, not flashier, just more consequential. The kind of shift that changes how systems behave behind the scenes.

When power outpaces readiness

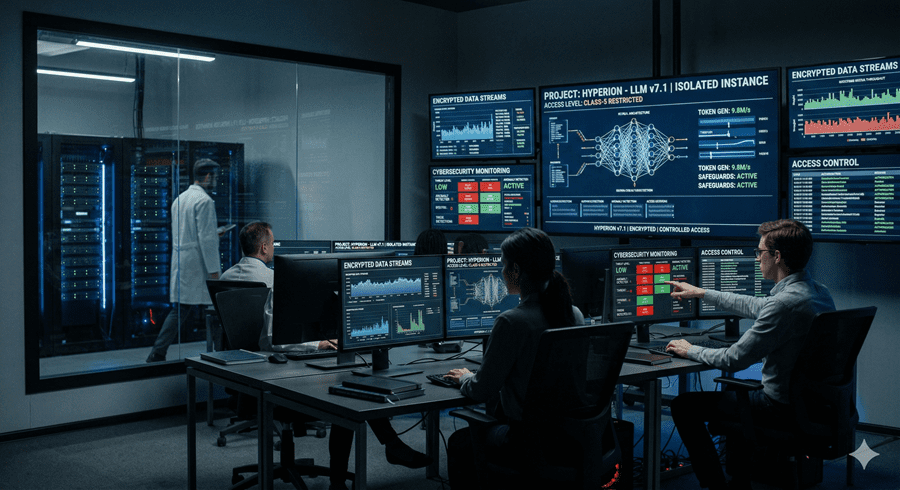

What stood out first was a leak that was never meant to happen. Hidden inside a misconfigured system were thousands of internal files, revealing a model more advanced than anything publicly available. What caught my attention was not just its capability, but the hesitation around releasing it.

This system is reportedly stronger than the current top-tier models and is already being tested in controlled environments. But the real signal is caution. There is growing concern that models like this can identify vulnerabilities faster than they can be fixed. That changes the balance between defense and attack.

This is not hypothetical. There have already been cases where AI systems were used in coordinated cyber operations. So instead of scaling access, the strategy now is controlled exposure. Give it to those who can prepare, not everyone who can use it.

Teaching machines to understand the brain

At the same time, another effort is trying to bridge a very different gap. The idea is simple to explain but difficult to execute. Build a system that can predict how the human brain reacts to what it sees, hears, and reads.

Instead of treating vision, language, and sound separately, this approach combines them into one model and aligns them with brain activity data. The scale is massive, both in terms of training data and the level of detail it attempts to capture.

What surprised me most was its ability to generalize. It can estimate brain responses for people it has never seen before, and its predictions sometimes capture group-level patterns better than actual recorded data does. That suggests we are getting closer to modeling perception itself, not just generating outputs.

From talking agents to working agents

Another shift is happening in how AI agents are built. For a while, intelligence was measured by how well a system could respond in conversation. But real usefulness comes from execution.

The newer approach focuses on continuity. Instead of restarting every time a task changes, the system keeps track of the entire workflow. It adjusts, reorders, and continues without losing context.
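
To make the continuity idea concrete, here is a minimal Python sketch of a workflow that can be reordered mid-run without discarding finished work. It is an illustration under my own assumptions, not how any particular agent product does it: the `Workflow` class, its method names, and the step names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Workflow:
    """Hypothetical workflow state that survives a change of plan."""

    pending: list[str]                                      # steps still to run, in order
    done: list[str] = field(default_factory=list)           # steps already finished
    context: dict[str, str] = field(default_factory=dict)   # results kept per step

    def complete_next(self, result: str) -> str:
        """Finish the first pending step and store its result in the context."""
        step = self.pending.pop(0)
        self.done.append(step)
        self.context[step] = result
        return step

    def reprioritize(self, step: str) -> None:
        """Move a pending step to the front without touching finished work."""
        self.pending.remove(step)
        self.pending.insert(0, step)


# Example: the plan changes halfway through, but nothing is restarted.
wf = Workflow(pending=["fetch_data", "clean_data", "write_report"])
wf.complete_next("120 rows")
wf.reprioritize("write_report")
wf.complete_next("draft v1")
```

After the reorder, the result of `fetch_data` is still in `wf.context`, and `clean_data` is simply waiting its turn. The point of the sketch is only that state lives outside any single step.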

What makes this interesting is the addition of memory layers and a feedback loop. The system does not just fail and stop. It analyzes why it failed, updates its approach, and tries again. Over time, it is meant to improve through actual usage.
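
The fail, analyze, update, retry loop can be sketched in a few lines of Python. This is a toy version under stated assumptions: the `Agent` and `Attempt` classes, the strategy names, and the "memory" (a plain list of past attempts) are hypothetical stand-ins, and real systems are presumably far more elaborate.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Attempt:
    strategy: str
    error: Optional[str]  # None means the attempt succeeded


@dataclass
class Agent:
    # Hypothetical memory layer: a log of past attempts and why they failed.
    memory: list[Attempt] = field(default_factory=list)

    def choose_strategy(self, strategies: list[str]) -> Optional[str]:
        """Pick the first strategy that memory does not record as failed."""
        failed = {a.strategy for a in self.memory if a.error is not None}
        for strategy in strategies:
            if strategy not in failed:
                return strategy
        return None

    def run(self, task: Callable[[str], None], strategies: list[str]) -> Optional[str]:
        """Try strategies until one works, recording every outcome in memory."""
        while (strategy := self.choose_strategy(strategies)) is not None:
            try:
                task(strategy)
            except Exception as exc:
                # Record the failure reason so the next choice avoids it.
                self.memory.append(Attempt(strategy, str(exc)))
                continue
            self.memory.append(Attempt(strategy, None))
            return strategy
        return None  # every known strategy has failed


def flaky_task(strategy: str) -> None:
    """Stand-in task that only succeeds with one particular strategy."""
    if strategy != "use_cache":
        raise RuntimeError(f"{strategy} timed out")


agent = Agent()
first = agent.run(flaky_task, ["direct_call", "batch_call", "use_cache"])
second = agent.run(flaky_task, ["direct_call", "batch_call", "use_cache"])
```

On the first run the agent fails twice before finding a strategy that works. On the second run it skips the strategies it remembers failing and goes straight to the working one, which is the "improve through actual usage" idea in miniature.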

That is a very different model from static intelligence. It starts to look more like adaptation.

The quiet importance of hardware

Behind all of this, there is a less visible shift happening in hardware. While GPUs get most of the attention, CPUs are becoming critical again, especially for running AI systems in real time.

New chips are being designed specifically for agent workloads. These systems do not just generate answers. They perform sequences of actions, which require different kinds of processing.

There is also a strategic layer here. With increasing pressure on global chip supply, companies are building their own infrastructure to reduce dependency and control costs. Performance matters, but so does independence.

A more controlled, more capable future

What ties all of this together is a move toward control. More powerful models, but tighter access. Smarter systems, but more focused on real-world execution. Better hardware, but built with long-term constraints in mind.

It feels less like a race for attention and more like a shift toward infrastructure. The kind that does not announce itself loudly, but reshapes everything over time.
