I once faced a simple question that refused to stay simple. A runaway trolley was heading toward five people. I could divert it onto another track, where only one person stood. What should I do?

My instinct was immediate. Save five, sacrifice one. It felt rational, even humane. But that confidence didn’t last.

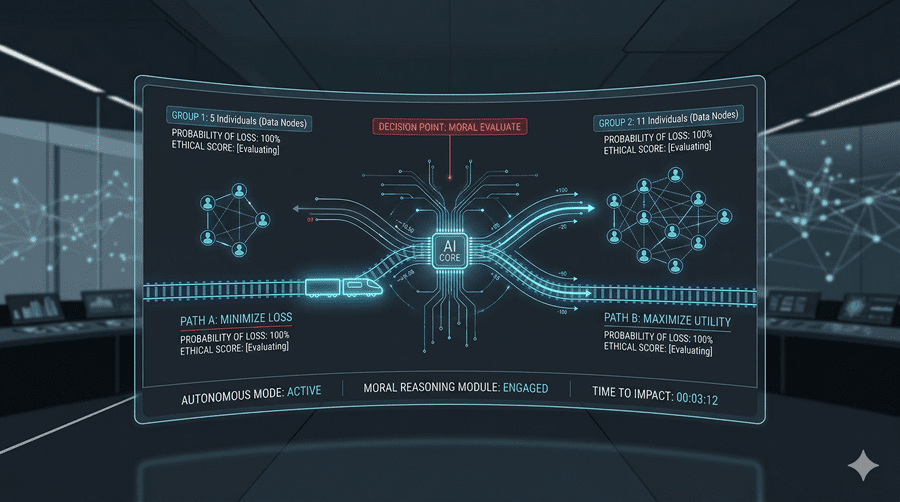

When Numbers Seem to Decide Everything

At first, morality looked like a math problem. Five lives are worth more than one, so choosing the greater number felt like the obvious moral path. This way of thinking judges actions by their outcomes: if an action leads to more overall happiness or less suffering, then it must be right.

This idea is deeply intuitive. In a crisis, we often justify difficult choices by pointing to the greater good. It feels practical, almost necessary.

But then the situation changed.

The Moment Intuition Breaks

Instead of pulling a lever, I imagined standing on a bridge above the tracks. This time, the only way to stop the trolley was to push a man off the bridge onto the track below. He would die, but the five would live.

Suddenly, I hesitated.

The numbers hadn’t changed, yet everything felt different. Pushing someone to their death felt like a line I could not cross. The outcome was the same, but the action itself felt wrong.

That contradiction unsettled me. If saving five lives justified one death before, why not now?

Actions Versus Outcomes

I began to notice a tension in how I reasoned. Sometimes I judged actions by their results. Other times, I judged them by what they were, regardless of the outcome.

Actively harming someone felt fundamentally different from redirecting harm. Even if both choices led to one death, one felt like killing, the other like choosing between unavoidable outcomes.

This revealed two competing moral instincts. One asks, “What produces the best result?” The other asks, “What kind of action is this?”

When Survival Challenges Morality

Then came a harsher scenario. Stranded at sea with no food, a group faced starvation. One of them was weak and unlikely to survive. The others chose to kill him and eat him to stay alive.

Here, the question became even more uncomfortable. Does extreme necessity justify taking a life? Is survival enough to excuse what would otherwise be unthinkable?

Some part of me understood the desperation. Another part resisted completely. Even in extreme conditions, it felt dangerous to allow people to decide whose life mattered less.

Why Philosophy Refuses Easy Answers

What unsettled me most was not the answers, but the process itself. Each new scenario forced me to rethink what I believed just moments earlier.

I realized that moral thinking is not about memorizing rules. It is about confronting contradictions within myself. The more I examined my beliefs, the less certain they became.

And yet, avoiding these questions is not an option. Every day, in small and large ways, I live out answers to them, whether I realize it or not.

That is the real challenge. Once I begin to question what feels obvious, I cannot return to simple certainty. What once felt clear becomes complicated, and that discomfort is impossible to ignore.
