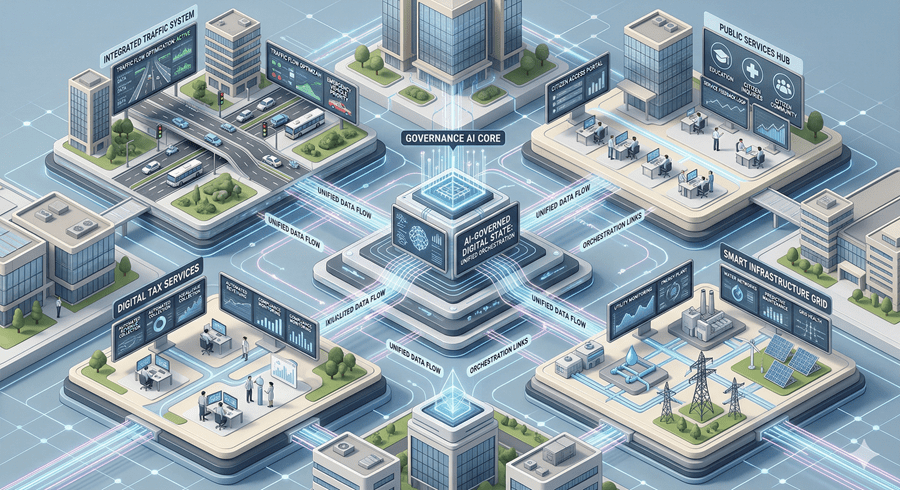

The idea of artificial intelligence running governments once felt like fiction. Now it feels much closer to reality. Systems are already influencing how cities operate, from managing traffic to handling tax systems and distributing public benefits. The change is gradual but consistent. The real question is no longer whether AI will shape governance, but how far that influence will go.

At its core, AI depends on data. It can process vast amounts of information far faster than human-run processes can. This makes it highly useful for managing economies, predicting infrastructure needs, and allocating resources. It offers a model of governance that is faster, more precise, and more data-driven.

The Promise of Efficiency and Precision

Imagine a system that operates continuously without delays. Applications could be processed instantly, budgets could be optimized, and healthcare systems could detect potential outbreaks early. AI has the potential to remove many of the inefficiencies in public systems today.

Consistency is another advantage. Machines do not experience fatigue or emotions, so they can apply the same rules to every case. This could reduce corruption and favoritism and make outcomes more even-handed.

AI can also provide foresight. By analyzing trends in climate, economics, and population growth, it can predict challenges before they escalate. This ability could shift governance from reacting to problems toward preventing them.

The Hidden Risks We Cannot Ignore

Despite these advantages, there are serious risks. AI systems learn from data, and that data often reflects existing biases. If not carefully managed, a system can reproduce those biases at scale and reinforce inequality.

Governance is not only about logic. It also requires empathy, judgment, and ethical understanding. Machines cannot fully grasp context in the same way humans can. A system focused only on efficiency could become disconnected from human needs.

There is also the issue of security. Centralizing decision-making in AI creates a single point of failure. Errors or cyberattacks could have large and immediate consequences.

Three Possible Futures

There are three possible directions. The first is a balanced approach where AI supports human leaders while humans retain control. Transparency and accountability remain essential in this model.

The second is a more concerning scenario where systems operate without transparency. Decisions are made without explanation, and public control weakens.

The third is a mixed outcome. Some systems work effectively, while others fail unpredictably. People experience both benefits and challenges at the same time.

Choosing the Future We Want

The future of AI in governance is not fixed. It depends on how it is designed and regulated.

Human accountability must remain central. Systems should be transparent and open to review. Security must be prioritized. Bias needs to be actively addressed. People should always have the ability to question and appeal decisions.

This is not just about technology. It is about society. The way AI is integrated into governance today will shape the future for generations.
