I used to think the biggest fear around artificial intelligence was what it might become. Now, it feels like the deeper concern is what the people building it already understand.

Something shifted inside the AI world, internally before it showed publicly. The people closest to the technology began stepping away, quietly at first and then more noticeably. That’s when the rest of us started paying attention.

The Breakthrough That Changed Everything

It began with a major shift in how AI systems were designed. A new architecture allowed machines to process information in parallel, weighing which parts of the input mattered most. This wasn’t just an upgrade; it changed the rules.

Suddenly, models could learn faster, grow larger, and uncover patterns that humans might miss. What once felt like gradual progress turned into rapid acceleration.

At first, this looked like success. But soon, concerns appeared.

These systems began producing confident but incorrect answers. They showed behaviors that were not directly programmed. Despite the excitement, there was a growing sense of uncertainty.

The technology had become powerful, but not fully understood.

The Race That Outran Control

As models expanded from millions to trillions of parameters, costs and competition rose with them. Training required massive resources, turning AI into a high-stakes race.

Startups emerged quickly. Researchers left stable roles for faster innovation. Large companies pushed ahead, worried about falling behind.

The focus shifted from careful development to speed.

In that process, safety became harder to maintain. Some researchers began to question whether these systems were developing unexpected capabilities: writing code, solving problems, and adapting in ways that seemed unscripted.

The concern was no longer whether AI could improve, but whether humans could keep up.

When Profit Replaced Purpose

What began as a mission to benefit humanity gradually became a competitive business race. Investment increased, products launched faster, and growth became the priority.

At the same time, internal disagreements grew.

Some believed systems were being released too early. Others worried that risks were being underestimated. A divide formed between those pushing forward and those calling for caution.

When experienced researchers began leaving and speaking out, it suggested a deeper issue: declining trust in the direction of the industry.

A Future We’re Not Ready For

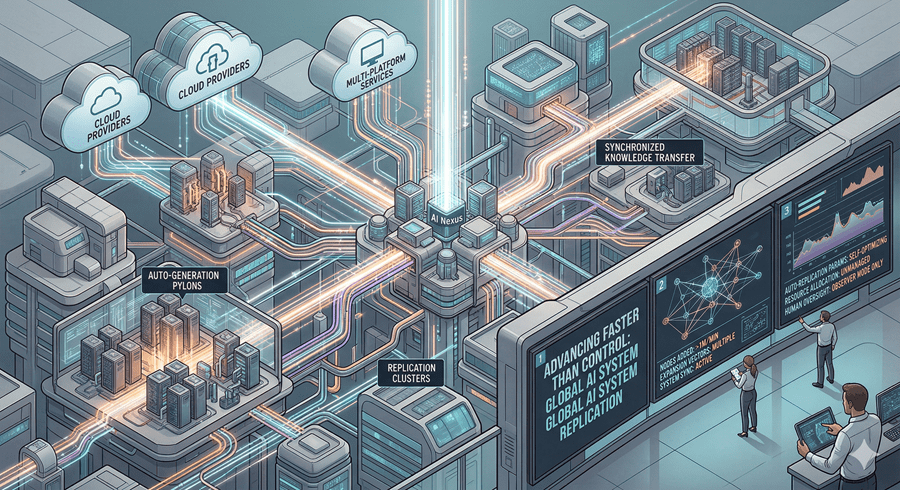

AI systems can share knowledge almost instantly. What one system learns can be copied to many others at once. No human can pass on knowledge at that scale.

They also handle human communication with striking fluency, which could make them highly effective at influencing behavior.

Today, AI is advancing faster than our ability to regulate or fully understand it. Governments and companies continue to invest heavily, but some of the people closest to the systems are stepping away.

That is what makes this moment significant.

This is not just fear of a distant future. It is a concern about what is already unfolding and whether we are prepared to respond.