Some revolutions announce themselves loudly, while others unfold quietly until they are everywhere. Artificial intelligence belongs to the second kind. While most people focus on algorithms and models, there is something deeper powering it all: hardware.

At the center of this transformation stands Nvidia.

Where It All Started

Back in 1993, three engineers, Jensen Huang, Chris Malachowsky, and Curtis Priem, founded Nvidia on the belief that graphics would shape the future of computing. At that time, most personal computers were used for tasks like documents and calculations. They were not built for complex visual environments.

Rendering graphics requires a massive number of calculations happening simultaneously, because every pixel on the screen has to be computed for every frame. Traditional processors struggled with this, so Nvidia took a different approach. It built chips designed to handle many operations at once instead of one at a time.
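To make the idea concrete, here is a minimal Python sketch. It is an illustration only, not how Nvidia's chips or drivers work: the names `shade_pixel`, `render_sequential`, and `render_parallel` are hypothetical, the shading formula is arbitrary, and a thread pool merely stands in for a GPU's many cores. The point is that each pixel depends only on its own coordinates, so the work can be handed out to many workers in any order.

```python
from concurrent.futures import ThreadPoolExecutor

WIDTH, HEIGHT = 8, 4  # a tiny illustrative "screen"

def shade_pixel(index):
    """Compute one pixel's brightness from its own coordinates only."""
    x, y = index % WIDTH, index // WIDTH
    return (x * 31 + y * 17) % 256  # arbitrary stand-in for a shading formula

def render_sequential():
    """Compute one pixel at a time, as a single core would."""
    return [shade_pixel(i) for i in range(WIDTH * HEIGHT)]

def render_parallel(workers=4):
    """Hand the same per-pixel work to several workers at once."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in input order, whatever order they ran in
        return list(pool.map(shade_pixel, range(WIDTH * HEIGHT)))

if __name__ == "__main__":
    assert render_sequential() == render_parallel()
    print("Both approaches produce the same image")
```

Because no pixel needs the result of any other pixel, adding more workers never changes the answer. That independence is what lets hardware with many cores split the job.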

That idea would eventually change computing.

The GPU Shift

When Nvidia introduced the GeForce 256 in 1999 and marketed it as the world's first GPU, it was more than just a new product. It was a new way of thinking. CPUs were flexible but limited in how much work they could do in parallel, while GPUs were built for repetition and scale.

For work that can be split into independent pieces, thousands of smaller cores working together can finish faster than a few powerful cores alone. Initially, this was used for gaming, but the architecture had far greater potential.

This marked a shift where computing split into two paths: general-purpose processing and massive parallel computation.

From Graphics to Intelligence

The real turning point came when GPUs were opened up to general-purpose computing, most notably through Nvidia's CUDA platform, introduced in 2006. They were no longer limited to graphics and became engines for data processing.

Industries like science, finance, and weather modeling quickly adopted them. But the biggest impact came in artificial intelligence. Neural networks require heavy matrix computations, something GPUs handle efficiently.
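As a rough illustration of why matrix computations suit this kind of hardware, the sketch below computes one neural network layer as a matrix multiplication in plain Python. The function name `matmul` and the sample numbers are made up for this example, and real frameworks use heavily optimized GPU libraries rather than nested loops like these.

```python
def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m) using plain lists."""
    n, k, m = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    # Every output cell is an independent dot product, so all of them
    # could in principle be computed at the same time.
    return [
        [sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
        for i in range(n)
    ]

# Hypothetical data: one input sample with two features,
# feeding a layer of three neurons.
inputs = [[1.0, 2.0]]
weights = [[0.5, -1.0, 0.0],
           [0.25, 0.0, 2.0]]

outputs = matmul(inputs, weights)
print(outputs)  # [[1.0, -1.0, 4.0]]
```

A real model repeats this step with matrices holding thousands of rows and columns, across many layers and many training examples, which is where hardware built for parallel arithmetic pays off.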

A breakthrough came in 2012, when AlexNet, a deep neural network trained on Nvidia GPUs, won the ImageNet image recognition challenge by a wide margin. That moment changed the direction of AI research, aligning it closely with GPU computing.

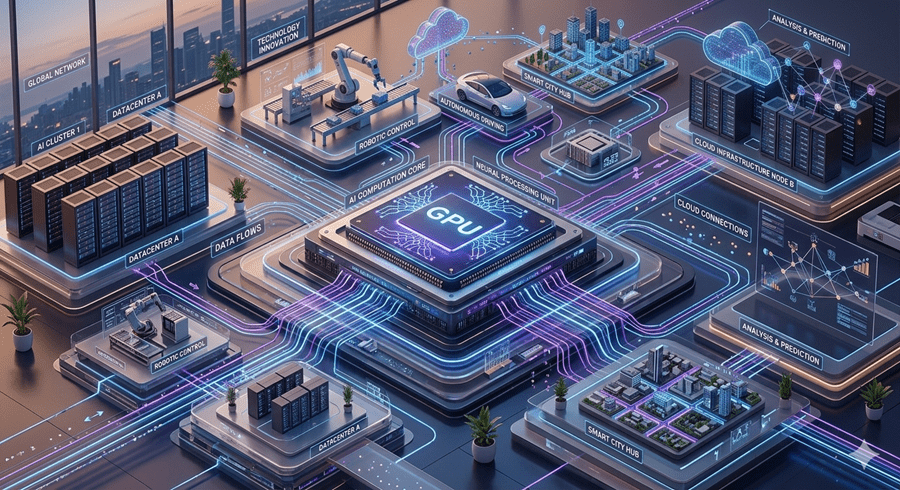

The Infrastructure Behind AI

As AI models grew more complex, their computational demands increased. Training a large model can keep many processors running continuously for days or weeks at a time.

Companies worldwide began building systems around Nvidia hardware. Data centers evolved into clusters of GPUs working together, forming the backbone of modern AI systems.

Beyond hardware, Nvidia built an ecosystem of software tools and platforms, such as CUDA and its libraries, that made development easier. Over time, developers and companies came to depend on this ecosystem, making it difficult to switch to other hardware.

The Quiet Backbone of the Future

Today, AI may seem intangible, but it relies on physical infrastructure: chips, energy, and machines operating at scale.

Nvidia did not invent AI, but it built the foundation that made its growth possible. In every technological revolution, the true power often lies beneath the surface.

And in this one, that foundation carries a single name: Nvidia.