Over the years, artificial intelligence has evolved from simple tools into systems that can write, code, and reason. Models like ChatGPT from OpenAI and Gemini from Google have pushed boundaries in ways that once seemed impossible.

But there is a fundamental limitation many people overlook.

These systems do not actually experience the world. They predict language.

That distinction is more important than it first appears.

The Limits of Language Models

Large language models learn by analyzing massive amounts of text. They become extremely skilled at predicting the next word (more precisely, the next token) in a sequence, capturing patterns across billions of examples.

That is why they can explain complex ideas, generate code, and even write poetry.

However, when asked what happens if a glass is dropped, the model describes the likely outcome. It does not simulate gravity, motion, or impact. Its answer comes from patterns in how people have written about falling glass, not from a model of the physics itself.

For conversation, this works extremely well. But for interacting with the real world, it is not enough.

What World Models Change

This is where world models come in.

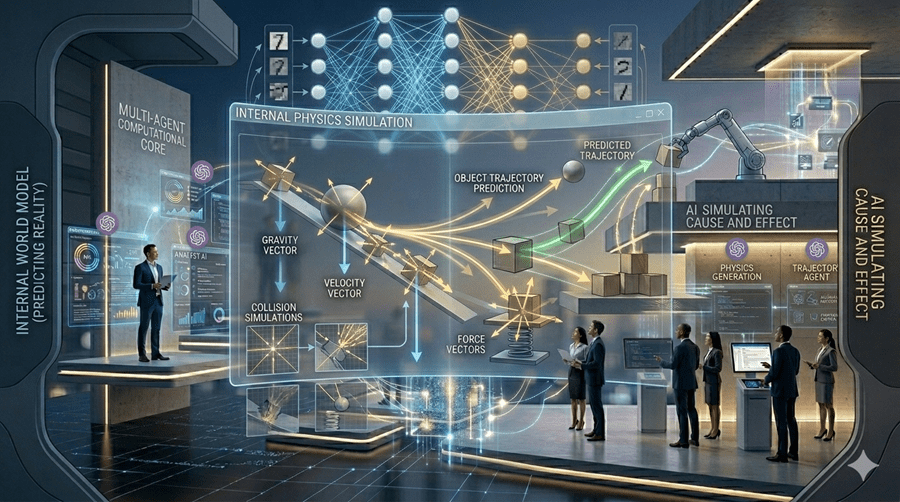

A world model does not just predict words. It predicts what happens next in an environment. It builds an internal simulation of cause and effect.

If an AI moves an object, it predicts the resulting motion. If it changes direction, it predicts how its perspective will shift.
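A world model can be pictured as a function that maps the current state, plus an action, to the next state. Running that function repeatedly "imagines" a future. The sketch below hand-codes such a function for a dropped glass using simple gravity. In the systems this article describes, the transition function would be learned from data rather than written by hand. The `State` class, the `step` and `imagine_fall` functions, and the time step are assumptions for illustration.

```python
from dataclasses import dataclass

GRAVITY = 9.81  # m/s^2, pulling downward
DT = 0.05       # simulation time step in seconds (an assumed value)


@dataclass
class State:
    height: float    # metres above the floor
    velocity: float  # m/s, positive = upward


def step(state: State, push: float = 0.0) -> State:
    """Predict the next state, given an optional upward push (the 'action', in m/s^2)."""
    accel = push - GRAVITY
    velocity = state.velocity + accel * DT
    height = max(0.0, state.height + velocity * DT)
    if height == 0.0:
        velocity = 0.0  # crude impact model: the object stops at the floor
    return State(height, velocity)


def imagine_fall(start: State) -> list[State]:
    """Roll the model forward, without acting, until the object reaches the floor."""
    trajectory = [start]
    while trajectory[-1].height > 0.0:
        trajectory.append(step(trajectory[-1]))
    return trajectory


# A glass dropped from a 1 m table, starting at rest.
path = imagine_fall(State(height=1.0, velocity=0.0))
print(f"hits the floor after about {(len(path) - 1) * DT:.2f} s")
```

The point is the shape of the computation: state in, predicted state out, repeated over time. A language model has no such loop to run.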

In simple terms, it imagines outcomes before acting.

Humans do this naturally in everyday situations, often without realizing it. World models aim to give machines a similar capability.

Learning to Simulate Reality

Researchers, including teams at DeepMind, have been exploring systems that learn internal representations of the world.

In controlled environments, AI agents learn to predict future states instead of relying only on trial and error. Some researchers describe this as a basic form of machine imagination.

Instead of blindly trying actions, the system simulates possible outcomes internally and chooses better strategies.
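One minimal way to picture "simulate, then choose" is exhaustive lookahead. The agent tries every short action sequence inside its model, scores the imagined outcomes, and only then acts on the best one. The sketch below assumes a one-dimensional world, a hand-written `predict` function standing in for a learned world model, and a simple distance-based score. All names are hypothetical. Research systems learn the model and use far more efficient planners than brute force.

```python
from itertools import product

ACTIONS = (-1, 0, 1)  # hypothetical moves: step left, stay, step right


def predict(position: int, action: int) -> int:
    """Stand-in for a learned world model: the predicted position after one action.
    Hand-coded here, with a wall at 0 that the agent cannot pass."""
    return max(0, position + action)


def imagine(start: int, actions: tuple[int, ...]) -> int:
    """Roll the model forward over a sequence of actions without acting in the world."""
    position = start
    for action in actions:
        position = predict(position, action)
    return position


def plan(start: int, goal: int, horizon: int = 4) -> tuple[int, ...]:
    """Simulate every action sequence of length `horizon` and return the one whose
    imagined end point is closest to the goal, preferring fewer moves on ties."""
    def cost(actions: tuple[int, ...]) -> tuple[int, int]:
        moves = sum(1 for a in actions if a != 0)
        return (abs(goal - imagine(start, actions)), moves)

    return min(product(ACTIONS, repeat=horizon), key=cost)


best = plan(start=1, goal=4)
print(best, "->", imagine(1, best))
```

Every candidate is tried only in imagination. A trial-and-error agent would instead have to execute actions in the real world to discover which ones work.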

It is the difference between guessing and planning.

Why This Matters

This shift moves AI from pattern recognition toward reasoning about reality.

It opens the possibility of systems that can control robots, drive vehicles, design structures, and simulate experiments before running them. Early signs of this direction can be seen in systems like OpenAI's video-generation model Sora, which produces scenes that stay largely consistent over time.

That is more than content generation. Keeping a scene consistent over time suggests the model has learned something about how the world behaves.

AI is moving from reacting to prompts toward anticipating outcomes.

If language models gave AI a voice, world models may give it something far more powerful: the ability to imagine what happens next.