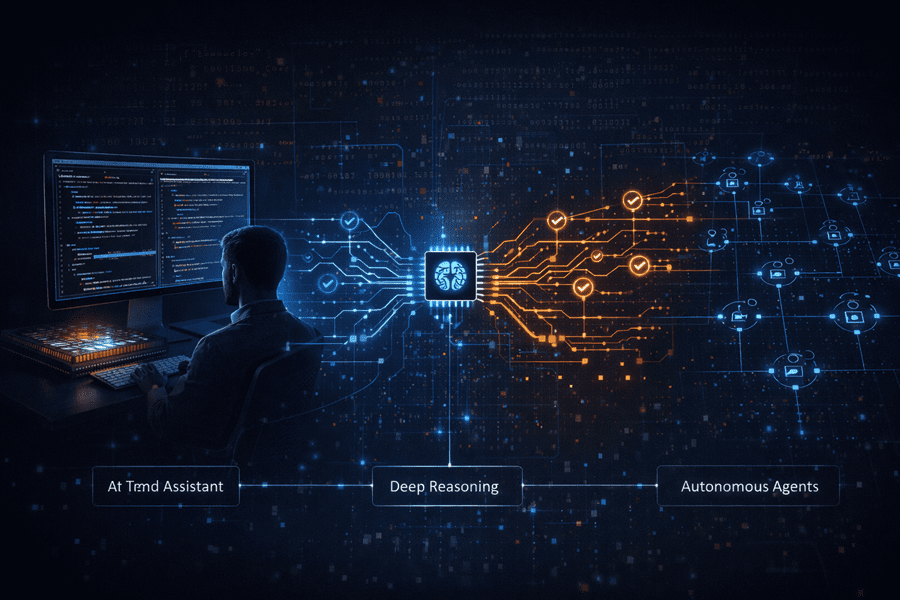

The AI landscape has shifted again. Within a short period, three major advances appeared from different corners of the industry, and together they suggest a new direction for how software may be built in the near future.

Each breakthrough focuses on a different priority: speed, reasoning depth, or cost. Each one also comes from a different company pushing AI development in its own direction. What makes this moment important is not any single model but how these approaches could combine to shape a new development workflow.

Real-Time Coding With Lightning Speed

The first shift comes from OpenAI with a system called GPT‑5.3 Codex Spark.

This model is designed for a very specific purpose: real-time coding. Instead of focusing on extremely complex reasoning tasks, Spark is optimized to respond almost instantly while developers write code. There is a big difference between asking an AI to build an entire application and asking it to refactor a function or adjust a user interface. For the smaller edits, speed matters more than raw intelligence.

To achieve this responsiveness, Spark runs on specialized hardware from Cerebras Systems built around its wafer-scale engine architecture. The goal is to reduce latency so developers can stay in their coding flow. Although Spark gives up some benchmark strength compared with larger models, the trade-off creates a faster and smoother development experience.

Deep Reasoning for Hard Problems

While OpenAI focuses on speed, Google DeepMind is pushing in the opposite direction with Gemini 3 Deep Think.

This system focuses on solving complex scientific and engineering problems. Instead of responding quickly, the model spends additional computation at inference time to think through difficult tasks. Researchers describe this approach as scaling test-time compute: the model checks its reasoning and explores multiple possible solutions before producing an answer.
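To make the idea of spending more compute at inference time concrete, here is a minimal sketch of one well-known form of it: sample several candidate answers and keep the one most of them agree on. This is not a description of how Google's system works internally. The function `propose_answer` is a hypothetical stand-in for a single model call, and its 60% accuracy is an assumption chosen only for illustration.

```python
import random
from collections import Counter


def propose_answer(rng):
    """Hypothetical stand-in for one sampled model answer to 17 * 24.

    Assumed to be right 60% of the time and otherwise off by a small error.
    """
    correct = 17 * 24
    if rng.random() < 0.6:
        return correct
    return correct + rng.choice([-10, -1, 1, 10])


def answer_with_budget(num_samples, seed=0):
    """Sample num_samples candidates and return (most common answer, its votes)."""
    rng = random.Random(seed)
    candidates = [propose_answer(rng) for _ in range(num_samples)]
    winner, votes = Counter(candidates).most_common(1)[0]
    return winner, votes


if __name__ == "__main__":
    # A larger budget means more samples, which makes the vote more reliable.
    for budget in (1, 5, 25, 125):
        winner, votes = answer_with_budget(budget)
        print(f"budget={budget:>3}  answer={winner}  votes={votes}")
```

A single sample is wrong fairly often under these assumptions, while a majority over many samples is wrong far less often. That is the basic trade: more computation per question in exchange for a more reliable answer.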

This leads to stronger results on difficult benchmarks and tasks requiring structured logic. One notable demonstration shows the model turning simple sketches into 3D designs that can be prepared for printing, highlighting how reasoning systems can transform ideas into physical outputs.

The Rise of Always-On AI Agents

The third development focuses on cost. MiniMax's M2.5 model aims to make autonomous AI agents affordable enough to run continuously.

Agents behave differently from normal chat models. They explore tasks, retry failures, use tools, and iterate repeatedly. If every run is expensive, the system becomes impractical. M2.5 reduces those costs so an agent can stay active for long periods, performing research, coding, document processing, and other tasks without constant prompting.
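A rough back-of-the-envelope calculation shows why per-token price matters so much for agents. The sketch below is only an illustration: the step counts, token counts, retry rate, and prices are all hypothetical numbers, not the published pricing of M2.5 or any other model.

```python
from dataclasses import dataclass


@dataclass
class AgentRun:
    steps: int                   # tool calls or reasoning turns in one run
    input_tokens_per_step: int   # context re-read on every step
    output_tokens_per_step: int  # tokens generated on every step
    retry_rate: float            # fraction of steps repeated once after a failure


def run_cost_usd(run, input_price_per_mtok, output_price_per_mtok):
    """Estimate the cost of one agent run, given prices per million tokens."""
    effective_steps = run.steps * (1 + run.retry_rate)
    input_cost = (
        effective_steps * run.input_tokens_per_step * input_price_per_mtok / 1_000_000
    )
    output_cost = (
        effective_steps * run.output_tokens_per_step * output_price_per_mtok / 1_000_000
    )
    return input_cost + output_cost


if __name__ == "__main__":
    # Hypothetical workload: 100 steps, half of which need one retry.
    run = AgentRun(steps=100, input_tokens_per_step=10_000,
                   output_tokens_per_step=1_000, retry_rate=0.5)
    # Hypothetical price points, in USD per million tokens.
    for label, in_price, out_price in [("pricier", 1.0, 10.0), ("cheaper", 0.1, 1.0)]:
        cost = run_cost_usd(run, in_price, out_price)
        print(f"{label}: ${cost:.2f} per run, ${cost * 24 * 30:.2f} for hourly runs over 30 days")
```

Because an agent re-reads its context on every step and repeats failed steps, the per-run cost scales with steps, retries, and price together. A tenfold price drop turns a workload that is hard to justify into one that can simply be left running.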

Together, these three developments form an interesting triangle: fast assistants for daily coding, deep reasoning systems for complex problems, and low-cost agents working in the background. The next generation of software will likely rely on all three.
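One way to picture a workflow that uses all three is a simple router that sends each task to the tier that suits it. The sketch below is purely illustrative. The `Tier` names, the `Task` fields, and the routing rules are assumptions made for this example, not an API offered by any of the companies mentioned.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FAST = "fast interactive assistant"
    DEEP = "deep reasoning system"
    BACKGROUND = "low-cost background agent"


@dataclass
class Task:
    description: str
    interactive: bool  # a developer is waiting on the result
    hard: bool         # needs multi-step scientific or engineering reasoning


def route(task):
    """Pick a tier using two hypothetical yes/no signals about the task."""
    if task.hard:
        return Tier.DEEP        # accuracy matters more than latency
    if task.interactive:
        return Tier.FAST        # keep the developer in flow
    return Tier.BACKGROUND      # long-running work where cost dominates


if __name__ == "__main__":
    tasks = [
        Task("rename a variable across one file", interactive=True, hard=False),
        Task("derive a stable numerical scheme", interactive=False, hard=True),
        Task("triage new issues overnight", interactive=False, hard=False),
    ]
    for task in tasks:
        print(f"{task.description} -> {route(task).value}")
```

Real routing would need richer signals than two booleans, but the shape is the same: latency-sensitive edits, difficult problems, and long-running chores each go to a different kind of model.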