Scrolling through social media used to feel predictable. Photos from friends, funny clips, maybe a viral moment that everyone was talking about. Lately, though, something feels different.

More and more of what I see online looks real at first glance but carries a strange, unsettling vibe. A celebrity endorsing a product they have never used. A bizarre clip of an animal doing something impossible. A dramatic security camera moment that feels just slightly off.

A growing share of the internet is now being generated by artificial intelligence.

The Rise of AI Content Everywhere

AI video tools have become incredibly accessible. Anyone with the right software can generate realistic clips, fake interviews, or entire scenes that look like they were captured by a camera.

These creations spread rapidly across platforms like Instagram, TikTok, Facebook, and X. Some are meant to entertain. Others exist purely to trick viewers.

Many people have started calling this flood of content "AI slop." The term refers to the endless stream of computer-generated images and videos filling social feeds.

Sometimes the content is obviously ridiculous. Other times, it is convincing enough to fool thousands of viewers before anyone questions it.

When Fake Feels Real

The biggest problem is not the obvious fakes. Those are easy to laugh at and move on from.

The real danger lies in believable content. A short clip of a heartwarming family moment. A police body camera scene. A celebrity speaking directly to the camera.

These videos trigger emotional reactions. They make people laugh, feel sympathy, or even get angry. But many of them are entirely fabricated.

Even public figures have become targets of deepfake videos used to promote products or spread misinformation.

And the unsettling truth is that most viewers cannot reliably tell the difference.

Clues Hidden in the Details

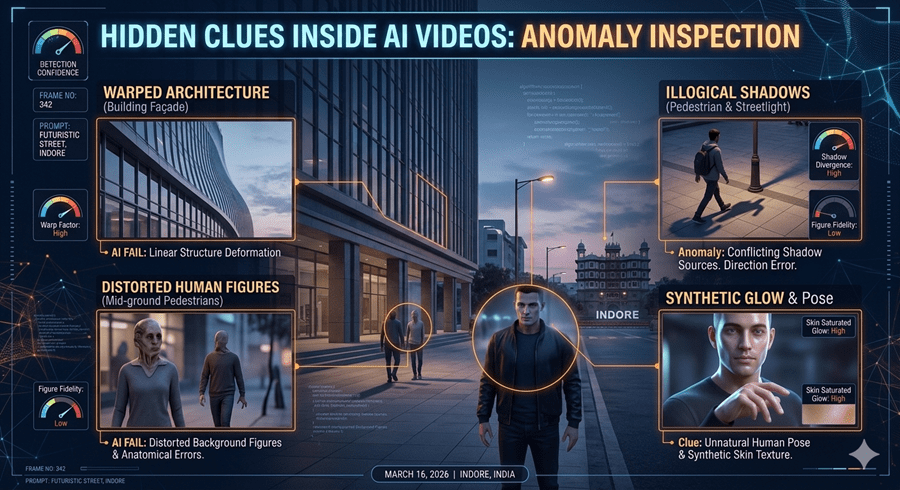

Despite how convincing AI videos can look, they often contain subtle clues.

One simple signal is the length of the clip. Many AI-generated videos are very short, often around ten to fifteen seconds. Generating longer footage that stays consistent from start to finish still requires enormous computing power.

Another giveaway appears in small details. Background characters may behave strangely or move in unnatural ways. Signs and text may contain odd spelling errors or incomplete words.

Architecture can also reveal problems. Windows may lead to solid walls. Doors might open onto empty space. The scene looks realistic until you study it closely.

The illusion begins to fall apart once you look beyond the main subject.

When Perfection Becomes Suspicious

Another strange pattern shows up in camera behavior.

AI-generated footage often looks too perfect. The framing is clean. The subject stays centered. The camera movement feels smooth and stable.

Real footage captured on phones or handheld cameras usually includes messy imperfections. Slight shaking, awkward framing, or video compression artifacts are common in authentic clips.

When a video looks unusually polished for a casual moment, it may deserve a second look.

Learning to Be Skeptical Again

The internet has always required a degree of skepticism. Photos can be edited, and stories can be misleading.

AI simply raises the stakes.

The most reliable defense is still common sense. Consider where the clip came from. Ask whether a trusted source shared it. Look for additional angles or reports confirming the same event.

Just because something appears realistic no longer means it actually happened.

And that simple realization might become one of the most important digital survival skills of the AI era.