Imagine finding a short script where someone is talking to an AI assistant, but the assistant’s replies have been ripped away. Now imagine having a machine that can guess the most sensible next word for any text you give it.

You could feed the script into the machine, let it predict the first word of the assistant’s response, add that word to the script, and repeat the process again and again until a full reply appears.

That simple idea is essentially how modern chatbots work.

A Machine That Predicts the Next Word

At its core, a large language model is a mathematical system designed to predict what word should come next in a sequence of text. Instead of choosing one word with certainty, it assigns probabilities to every possible next word.

When someone sends a prompt to a chatbot, the system analyzes the conversation so far and predicts what an AI assistant might say next. It selects one word, adds it to the text, and then repeats the process to generate a full response.
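To make that loop concrete, here is a minimal Python sketch. The "model" is a hypothetical lookup table (`TOY_MODEL`) that only looks at the last word. A real language model computes its probabilities with a neural network that reads the entire conversation. `next_word_probabilities` and `generate` are illustrative names, not part of any real library.

```python
import random

# Hypothetical toy "model": for each word, the probability of each possible next word.
# A real language model computes these with a neural network over the whole text so far;
# this table only looks at the last word, purely for illustration.
TOY_MODEL = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.5},
    "a": {"cat": 0.3, "dog": 0.7},
    "cat": {"sat": 0.8, "<end>": 0.2},
    "dog": {"ran": 0.7, "<end>": 0.3},
    "sat": {"<end>": 1.0},
    "ran": {"<end>": 1.0},
}

def next_word_probabilities(words):
    """Return a probability for every possible next word, given the text so far."""
    return TOY_MODEL[words[-1]]

def generate(max_words=10, seed=None):
    rng = random.Random(seed)
    words = ["<start>"]
    for _ in range(max_words):
        probs = next_word_probabilities(words)
        # Pick one word at random, weighted by the model's probabilities.
        choice = rng.choices(list(probs), weights=list(probs.values()))[0]
        if choice == "<end>":
            break
        words.append(choice)  # add the chosen word to the text, then repeat
    return " ".join(words[1:])

print(generate(seed=0))
```

Different seeds can produce different sentences, such as "the dog ran" or "a cat sat", because each step picks a word at random according to its probability.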

Rather than always picking the single most likely word, the system samples from those probabilities, so it occasionally chooses a less likely one. Interestingly, this small amount of randomness makes responses sound more natural and keeps them from becoming repetitive.

That is why asking the same question twice can lead to slightly different responses.
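A common way to control this randomness is a setting usually called "temperature". The model's raw scores are divided by the temperature before being turned into probabilities. The article doesn't name this knob, so treat the sketch below as an illustration under that assumption. The scores for the four candidate words are made up.

```python
import math
import random

def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    top = max(scaled)
    exps = [math.exp(s - top) for s in scaled]  # subtract the max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]

def sample(words, scores, temperature, rng):
    """Pick one word at random according to the temperature-adjusted probabilities."""
    probs = softmax_with_temperature(scores, temperature)
    return rng.choices(words, weights=probs)[0]

# Hypothetical scores a model might assign to candidate next words.
words = ["great", "good", "fine", "okay"]
scores = [2.0, 1.5, 0.5, 0.1]

rng = random.Random(42)
for t in (0.1, 1.0):
    picks = [sample(words, scores, t, rng) for _ in range(1000)]
    print(t, {w: picks.count(w) for w in words})
```

At a temperature of 0.1, "great" gets more than 99% of the probability and wins almost every time. At 1.0 it gets only about half, so the other words show up regularly.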

Learning From Massive Amounts of Text

To learn how language works, these models are trained on enormous collections of written text. The scale is hard to imagine.

If a person tried to read the training data used for early large models nonstop, it would take thousands of years. Modern systems are trained on even larger datasets.

During training, the model repeatedly sees pieces of text where the final word is hidden, and it must predict that missing word. After each guess, an algorithm slightly adjusts the model’s internal parameters so the correct word becomes a little more likely next time.

The method used to work out these adjustments is called backpropagation, and the process is repeated across trillions of examples.
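Here is a single training step in miniature. It assumes the standard cross-entropy loss, `-log(probability of the correct word)`. The toy's only parameters are the three scores themselves, so the gradient can be written out by hand. In a real model, backpropagation computes the same kind of gradient for billions of parameters spread across many layers. The example sentence, vocabulary, and learning rate are hypothetical.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def training_step(scores, correct_index, learning_rate=0.5):
    """One gradient-descent step on the loss -log p(correct word).

    For softmax outputs, the gradient of this loss with respect to each score is
    (probability - 1) for the correct word and (probability) for every other word.
    Each score moves a small step against its gradient.
    """
    probs = softmax(scores)
    loss = -math.log(probs[correct_index])
    new_scores = []
    for i, (s, p) in enumerate(zip(scores, probs)):
        target = 1.0 if i == correct_index else 0.0
        new_scores.append(s - learning_rate * (p - target))
    return new_scores, loss

# Hypothetical example: after "The cat sat on the", the hidden word is "mat".
vocab = ["mat", "dog", "moon"]
scores = [0.0, 0.0, 0.0]  # the untrained toy model has no preference yet
for step in range(3):
    scores, loss = training_step(scores, vocab.index("mat"))
    print(step, round(loss, 3), round(softmax(scores)[0], 3))
```

With each step the loss falls and the probability assigned to "mat" rises, which is the "make the correct word more likely next time" idea in numbers.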

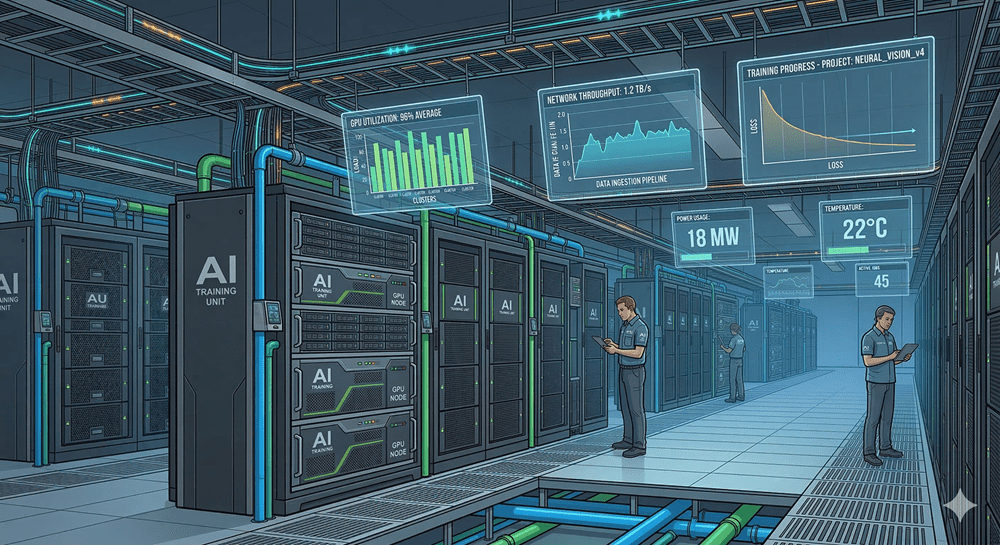

Why Training Requires So Much Power

The amount of computation required to train these models is enormous.

Even if you could perform a billion calculations every second, completing all the operations needed to train the largest language models would take more than one hundred million years.
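You can run those numbers backwards to see what they imply. At a billion operations per second, one hundred million years corresponds to roughly 3 × 10^24 operations, so the claim puts training the largest models above that figure. The helper `years_needed` below is just an illustrative name.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # about 3.16e7 seconds

def years_needed(total_operations, operations_per_second=1e9):
    """How many years a given number of operations takes at a fixed rate."""
    return total_operations / operations_per_second / SECONDS_PER_YEAR

# Work backwards: how many operations fill 100 million years at 1e9 per second?
implied_minimum = 1e9 * SECONDS_PER_YEAR * 100e6
print(f"{implied_minimum:.2e} operations")  # 3.16e+24 operations
print(round(years_needed(implied_minimum)))  # 100000000
```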

In practice, companies rely on specialized chips called GPUs (graphics processing units), which are designed to run huge numbers of calculations in parallel. That parallelism is what makes large-scale AI training possible.

The Breakthrough of Transformers

A major leap forward came with a model design known as the transformer. Earlier language models processed text word by word, which limited speed and efficiency.

Transformers changed that by allowing models to process entire passages at once. At the center of this design is a mechanism called attention.

Attention allows words in a sentence to influence each other based on context. For example, the meaning of the word “bank” changes depending on whether the surrounding text refers to finance or a river.
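The "bank" example can be reproduced with a stripped-down version of the attention calculation. The sketch uses scaled dot-product attention, but it assumes each word's vector serves as its own query, key, and value. Real transformers first multiply each vector by learned query, key, and value matrices. The two-dimensional "finance-ness / river-ness" embeddings are invented for illustration.

```python
import math

def softmax(xs):
    """Convert a list of scores into weights that sum to 1."""
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs.

    Simplification: no learned query/key/value matrices, a single attention head.
    """
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        # How strongly this word attends to every word in the passage.
        weights = softmax([dot(q, k) / math.sqrt(d) for k in vectors])
        # The output is a weighted average of all the vectors, so context leaks in.
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return outputs

# Hypothetical 2-D embeddings: [finance-ness, river-ness].
EMB = {
    "bank": [1.0, 1.0],  # ambiguous on its own
    "money": [2.0, 0.0],
    "river": [0.0, 2.0],
}

for sentence in (["money", "bank"], ["river", "bank"]):
    bank_out = attention([EMB[w] for w in sentence])[-1]
    print(sentence, [round(x, 2) for x in bank_out])
```

The ambiguous "bank" vector `[1.0, 1.0]` comes out as `[1.5, 0.5]` next to "money" and `[0.5, 1.5]` next to "river". The same word ends up with a different meaning depending on its context.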

Turning Predictions Into Helpful AI

The first training stage teaches models to predict text. But predicting text alone does not make a helpful assistant.

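To improve responses, a further training stage uses human feedback, an approach often called reinforcement learning from human feedback (RLHF). Reviewers evaluate answers and flag problems, and their judgments are used to steer the model toward outputs people prefer.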

What begins as a machine predicting the next word eventually becomes something much more useful: a system capable of answering questions, explaining ideas, and holding conversations that feel surprisingly natural.